I think you have found your workaround

To my knowledge that’s partly correct.

I was always talking about working profiles, with no conversions in the middle, but just working with color spaces and pixel values.

As I said, my maths is pretty simple: each pixel is just a set of 3 numbers, one for each primary color. If some algorithm changes those values, I end up with a pixel with 3 new values, and that’s it.

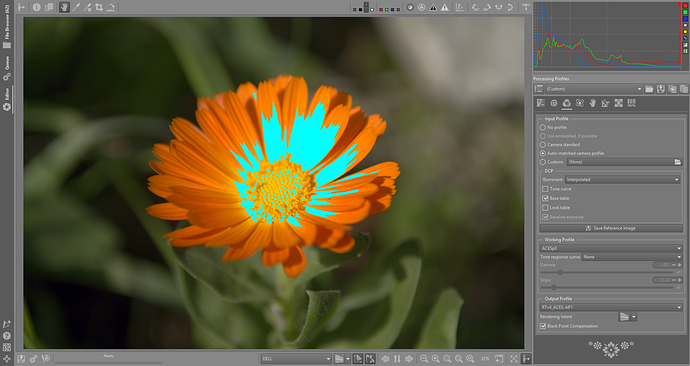

Then it happens that those 3 values should represent a visible color, and this is where the problems start: if the values fall outside the boundaries of the chosen color space, that color doesn’t exist in that color space. But what happens if one tool pushes a color outside the gamut and the next one brings it back inside? If the pixel gets clipped after the first tool, the next one can’t recover the lost information (details).
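To make that concrete, here is a minimal Python sketch of the idea. Everything in it is my own illustration: `scale_saturation` and `clip` are hypothetical stand-ins for "some tool", not what any real raw processor does, and the pixel values are made up.

```python
def scale_saturation(pixel, factor):
    """Crude saturation control (hypothetical tool): scale each channel's
    distance from the pixel's mean value by `factor`."""
    mean = sum(pixel) / 3.0
    return tuple(mean + (c - mean) * factor for c in pixel)


def clip(pixel):
    """Force every channel into the 0..1 range of the color space."""
    return tuple(min(1.0, max(0.0, c)) for c in pixel)


original = (0.90, 0.20, 0.10)

# Tool 1 pushes the pixel outside the 0..1 range.
boosted = scale_saturation(original, 2.0)

# Tool 2 brings it back. Without clipping in between, nothing is lost.
kept = scale_saturation(boosted, 0.5)

# With clipping after tool 1, tool 2 can no longer recover the original.
lost = scale_saturation(clip(boosted), 0.5)

print("after tool 1:        ", boosted)
print("unclipped round trip:", kept)
print("clipped round trip:  ", lost)
```

In this toy case the unclipped round trip returns the original values (up to floating-point rounding), while the clipped one ends up noticeably less red than the pixel I started with.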

To me, the fact that a pixel is out of gamut while in the working space is not a problem. That’s just maths. When the image gets converted into the output color space, all the unreal or out-of-gamut colors will be clipped or discarded, but not before the full processing has finished.

In this sense, if I choose ACES AP0 as my working profile, the part of that color space that doesn’t correspond to colors real to the human eye is still useful: while I stay inside the gamut of the working profile, I can work with pixel values that are mathematically correct, and only when exporting the final image do I need to worry about unreal or out-of-gamut colors. In the end, if I export an image to sRGB, plenty of ACES AP0 colors will fall outside the sRGB gamut, but that is another problem. That’s the output problem, not the working profile gamut problem.
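A rough sketch of that export step, again in Python. The two matrices are the commonly published AP0-to-XYZ and XYZ-to-linear-sRGB values as I remember them, so please check them against a reference before relying on them. I also skip the D60-to-D65 chromatic adaptation that a proper conversion would include, so the numbers are only approximate. The function names are mine.

```python
# Assumed values: AP0 RGB -> CIE XYZ (D60 white), as commonly published.
AP0_TO_XYZ = (
    (0.9525523959, 0.0000000000, 0.0000936786),
    (0.3439664498, 0.7281660966, -0.0721325464),
    (0.0000000000, 0.0000000000, 1.0088251844),
)

# Assumed values: CIE XYZ (D65 white) -> linear sRGB, as commonly published.
XYZ_TO_SRGB = (
    (3.2404542, -1.5371385, -0.4985314),
    (-0.9692660, 1.8760108, 0.0415560),
    (0.0556434, -0.2040259, 1.0572252),
)


def apply_matrix(matrix, vector):
    """Multiply a 3x3 matrix by a 3-component vector."""
    return tuple(sum(matrix[r][c] * vector[c] for c in range(3)) for r in range(3))


def ap0_to_linear_srgb(pixel):
    """Approximate conversion; chromatic adaptation is deliberately skipped."""
    return apply_matrix(XYZ_TO_SRGB, apply_matrix(AP0_TO_XYZ, pixel))


def in_gamut(pixel, eps=1e-6):
    """True if every channel lies inside the 0..1 range of the output space."""
    return all(-eps <= c <= 1.0 + eps for c in pixel)


grey = (0.18, 0.18, 0.18)   # an ordinary mid grey in the working space
green = (0.0, 1.0, 0.0)     # the AP0 green primary, not a real color

for name, pixel in (("grey", grey), ("green", green)):
    out = ap0_to_linear_srgb(pixel)
    print(name, out, "in sRGB gamut" if in_gamut(out) else "out of sRGB gamut")
```

The grey survives the conversion, while the green primary lands well outside 0..1 in sRGB, and that only becomes a question at export time.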

But as suggested before, I’m most likely wrong, and I urgently need to update my maths and fully understand what happens with those 3-axis ICC profiles, with highly saturated colors, and so on. So I have some reading to do…

now we are going beyond my knowledge
