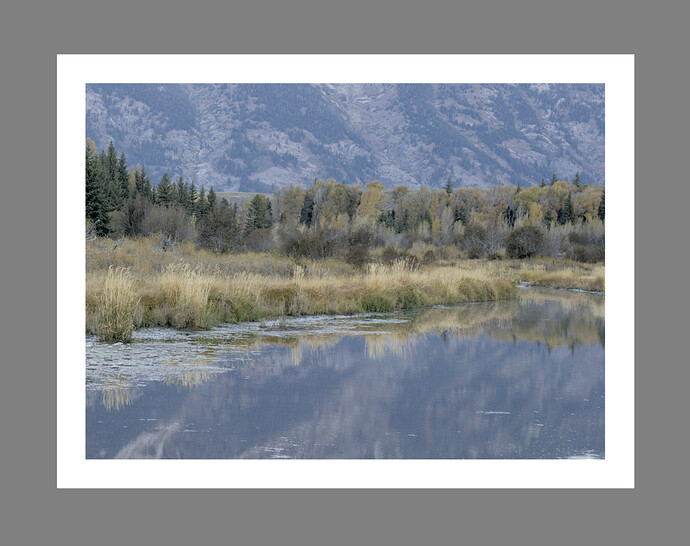

Hello, AgX is awesome!

I have played around with it for some time, and I’m starting to get a good feel for what each slider does to my picture.

I’m able to get nice-looking results relatively fast.

But I’m also super curious and want to understand how this works and why, on a technical level, and not only as a vibe-based user.

One of my vibe-based observations is that the AgX module seems to make the exposure module unnecessary.

Pressing the auto-tune level picker and then adjusting “pivot relative exposure” seems to have (almost exactly?) the same effect as applying exposure compensation in the exposure module beforehand.

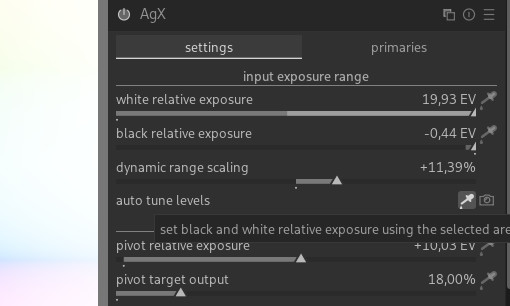

This brings me to the next and main question: what does black/white relative exposure in EV actually mean?

I’m aware of EV as a logarithmic unit, Exposure Value.

1 EV = 1 stop = doubling of light.
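
Just to pin down the arithmetic I have in mind, here is a tiny sketch of the stop-to-ratio relationship. The function name `light_ratio` is my own and is not taken from darktable.

```python
def light_ratio(ev: float) -> float:
    """Linear light ratio that corresponds to a difference of `ev` stops."""
    return 2.0 ** ev


# Each +1 EV doubles the light, each -1 EV halves it.
for ev in (-2, -1, 0, 1, 2):
    print(f"{ev:+d} EV -> x{light_ratio(ev):g}")
```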

But the “relative” part makes me (over)think this. Relative to what?

The tooltip and the manual refer to this as “relative exposure above middle gray.”

OK, so I assume “middle gray” is fixed at 18%?

If so, 18% of what? The raw file's maximum value? The (max.) RGB value? The luminance value?
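
To make my current reading of the tooltip concrete, here is a small sketch. It assumes that middle gray is the value 0.18 in linear terms and that "relative exposure" is simply the base-2 log of a linear value divided by that gray. Both are my assumptions, not something I have confirmed in the manual or the source, and the names `MIDDLE_GRAY`, `relative_ev` and `linear_from_ev` are made up for illustration.

```python
import math

MIDDLE_GRAY = 0.18  # assumption: middle gray as a linear value


def relative_ev(linear_value: float, gray: float = MIDDLE_GRAY) -> float:
    """Stops above (+) or below (-) middle gray for a linear value."""
    return math.log2(linear_value / gray)


def linear_from_ev(ev: float, gray: float = MIDDLE_GRAY) -> float:
    """Inverse of relative_ev: the linear value `ev` stops away from gray."""
    return gray * 2.0 ** ev


# A value twice as bright as middle gray sits 1 EV above it.
print(relative_ev(0.36))
# A value at a quarter of middle gray sits 2 EV below it.
print(relative_ev(0.045))
```

If this reading is right, a white relative exposure of, say, +6 EV would correspond to a linear value of `linear_from_ev(6)`. Whether that is what the module actually does is exactly what I am asking.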

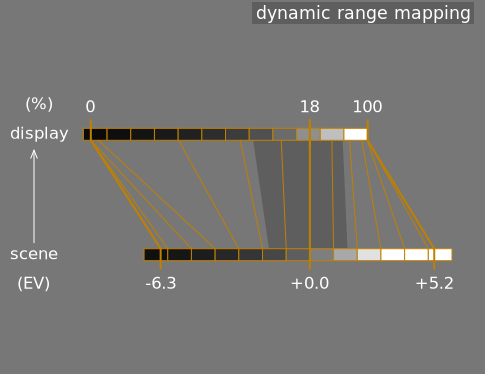

But to calculate what middle gray (18%) is, one needs to know what 0% and 100% are.

Saying 18% or “the middle” implies the upper and lower bounds must already be known.

So then why do I need to manually or automatically adjust the slider?

As a creative control, I see the point of “clipping” highlights or “crushing” blacks.

But in these cases, would it not make sense to make the slider relative to the black and white points?

For example, set the black point to 1% and the white point to 99%,

or the white point to −1% from 100% and the black point to +1% from zero?

In any case, I would love to see the clipping/crushing point of highlights and blacks from this slider in a working color space–referred histogram/scope.

The last question is: why is the “default” dynamic range of this module more than my camera or a 14-bit linear raw file can deliver?

Is dynamic range gained through the white balance coefficients?
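
For the 14-bit part of the question, this is the back-of-the-envelope calculation I am working from. It is an idealized sketch: it ignores noise, black level offsets and white balance, and it assumes middle gray sits at 18% of full scale, which may not match how the module sees the data.

```python
import math

BITS = 14
MIDDLE_GRAY = 0.18  # assumption: gray as a fraction of full scale

max_code = 2 ** BITS - 1  # largest integer code in a 14-bit file

# Ratio between the largest code and the smallest non-zero code, in stops.
total_range_ev = math.log2(max_code)

# Where full scale and one code value sit relative to middle gray.
white_ev = math.log2(1.0 / MIDDLE_GRAY)
black_ev = math.log2((1.0 / max_code) / MIDDLE_GRAY)

print(f"total range: {total_range_ev:.2f} EV")
print(f"white: {white_ev:+.2f} EV, black: {black_ev:+.2f} EV relative to gray")
```

Under these assumptions the whole file spans just under 14 EV, with roughly 2.5 EV above gray and roughly 11.5 EV below it, so any default range wider than that is what puzzles me.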

Thanks for reading, and for any help. I hope someone can point me in the right direction to understand this better.