Hello,

I often get weird results when aligning/stacking Moon .SER files with the latest Siril.

I’d like to get confirmation that I’m correctly using the software as I can get better results using a Python script that I developed.

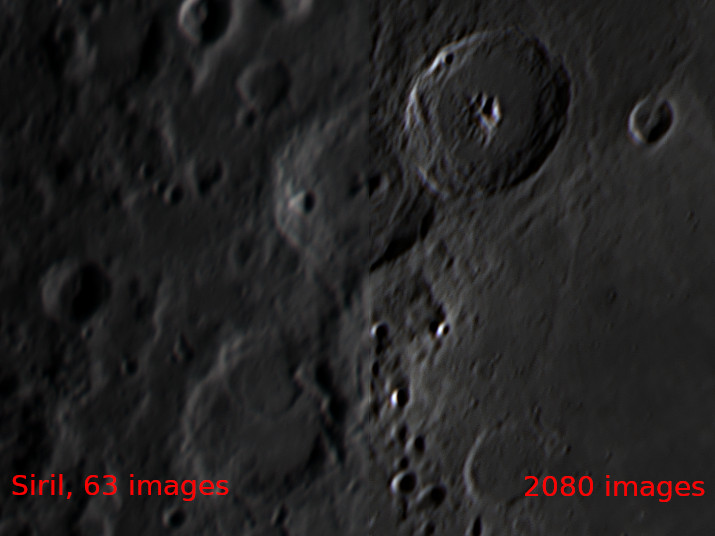

Using a ~3300-image SER of the Moon, I keep 2080 images and apply “image pattern alignment”:

When I pass the same area as a template to OpenCV’s matchTemplate with TM_CCORR_NORMED, I get this result with the same 2080 images:

Sorry, that last image has also been processed with wavelets, but what seems significant to me is that all images have been aligned correctly here.

I tried changing the alignment parameters in Siril, but I can’t get comparable results in my test. Any suggestions?


Hello. For now, Siril is not really designed for lunar, solar and planetary registration.

I would advise using Planetary System Stacker (PSS), which is free too.

Siril is then well suited for the wavelets step.

Thanks @lock042!

Indeed, wavelets in Siril are great. I did use PSS, but then stopped in order to try using only Siril.

I’ll try PSS on the same data set to compare results.

In your opinion, would it be worthwhile to add a new alignment algorithm to Siril, to offer users more accurate stacking of planetary images?

I feel that I could try to integrate “my algorithm” into Siril, maybe by studying and taking inspiration from register_shift_dft() and the code around it. But I’m afraid I don’t have enough spare time to browse and understand Siril’s code base.

The following code gives the general principles of my idea:

- I hope it will help me get some feedback on a new alignment algorithm. As I’m a real noob in image analysis, I might be missing important aspects and proposing a dead end, or something that would rarely be usable/accurate.
- Also, if the code below seems relevant, it might help me get some support from Siril’s devs.

```cpp
/* Fragment, assuming OpenCV (namespace cv) and:
   Mat img;      // image loaded with, for example, imread(filename, IMREAD_COLOR)
   Mat pattern;  // pattern to look for in img (e.g. Siril's selection)
*/

/* We need a temporary 'result' buffer to detect the pattern's location within img. */
int results_w = img.cols - pattern.cols + 1;
int results_h = img.rows - pattern.rows + 1;
Mat result;
result.create(results_h, results_w, CV_32FC1);

/* This OpenCV function is the key: it detects the pattern's location in img.
   My Python script uses patterns converted to grey-level images,
   but results seem good with color pattern images too. */
matchTemplate(img, pattern, result, TM_CCORR_NORMED);

/* A few variables used to analyse the result just computed. */
double ignored1;
double score;
Point ignored2;
Point matchLoc;

/* score is the matching score of pattern within img [0 .. 1.0];
   matchLoc.x and matchLoc.y are the coordinates of pattern within img. */
minMaxLoc(result, &ignored1, &score, &ignored2, &matchLoc, Mat());

/* At this point, assuming we first compute matchLoc on the reference image,
   we can then compute it for all other images and set the x/y shifts of the
   pattern relative to the reference image whenever score >= 0.9, for example,
   as in ecc.cpp's findTransform(). */
```
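For readers without OpenCV at hand, the principle above can be sketched in plain C++. This is only an illustration under assumptions: `Gray`, `Match`, `Shift`, `bestMatch` and `shiftVsReference` are hypothetical names, the search is a brute-force loop rather than OpenCV's implementation, and the score is the normalised cross-correlation that the OpenCV documentation gives for TM_CCORR_NORMED.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Minimal greyscale image, row-major float pixels (hypothetical helper type).
struct Gray {
    int w, h;
    std::vector<float> px;
    float at(int x, int y) const { return px[static_cast<std::size_t>(y) * w + x]; }
};

// Best position of the pattern in an image, with its score.
struct Match { int x, y; double score; };

// Brute-force search for the position maximising
//   sum(I*T) / sqrt(sum(I^2) * sum(T^2))
// over every window of the image that fully contains the pattern.
Match bestMatch(const Gray& img, const Gray& pat) {
    assert(pat.w <= img.w && pat.h <= img.h);
    double tt = 0.0;  // sum(T^2), constant for a given pattern
    for (float v : pat.px) tt += static_cast<double>(v) * v;

    Match best{0, 0, -1.0};
    for (int y = 0; y + pat.h <= img.h; ++y) {
        for (int x = 0; x + pat.w <= img.w; ++x) {
            double it = 0.0, ii = 0.0;
            for (int j = 0; j < pat.h; ++j) {
                for (int i = 0; i < pat.w; ++i) {
                    double a = img.at(x + i, y + j), b = pat.at(i, j);
                    it += a * b;
                    ii += a * a;
                }
            }
            double denom = std::sqrt(ii * tt);
            double s = denom > 0.0 ? it / denom : 0.0;
            if (s > best.score) best = {x, y, s};
        }
    }
    return best;
}

// Displacement of the pattern in a frame relative to the reference frame.
// ok is false when the frame's score is below minScore (frame to be rejected).
struct Shift { int dx, dy; bool ok; };

Shift shiftVsReference(const Match& ref, const Match& frame, double minScore = 0.9) {
    return {frame.x - ref.x, frame.y - ref.y, frame.score >= minScore};
}
```

Calling `bestMatch` once on the reference frame and once per other frame, then `shiftVsReference` on each pair, yields the per-frame translation to undo before stacking. The 0.9 default only mirrors the example threshold mentioned above; it is not a tuned value.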

Hello.

All contributions are welcome.

However, for planetary stacking, I think that a simple algorithm (by simple I mean one that works on the whole image at once) won’t work. Indeed, we need to use a multipoint stacking algorithm.

This is what PSS and AS!3 do. It helps to “fight” the effect of the atmosphere.
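To make the multipoint idea a bit more concrete, here is a minimal C++ sketch of its first step only: estimating one local shift per alignment box instead of a single shift for the whole frame. It is not how PSS or AS!3 are implemented; `Gray`, `LocalShift`, `localShifts`, the regular grid and the sum-of-squared-differences criterion are all assumptions chosen for brevity.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

// Minimal greyscale image, row-major float pixels (hypothetical helper type).
struct Gray {
    int w, h;
    std::vector<float> px;
    float at(int x, int y) const { return px[static_cast<std::size_t>(y) * w + x]; }
};

// Shift (dx, dy) found for the alignment box centred at (cx, cy).
struct LocalShift { int cx, cy, dx, dy; };

// Lay a regular grid of box x box alignment boxes over the reference and, for
// each one, search offsets within +/- radius for the position in the frame
// that minimises the sum of squared differences.
std::vector<LocalShift> localShifts(const Gray& ref, const Gray& frame,
                                    int box, int radius) {
    assert(ref.w == frame.w && ref.h == frame.h && box > 0 && radius >= 0);
    std::vector<LocalShift> out;
    // Boxes start at 'radius' so every searched offset stays inside the frame.
    for (int by = radius; by + box + radius <= ref.h; by += box) {
        for (int bx = radius; bx + box + radius <= ref.w; bx += box) {
            double bestErr = std::numeric_limits<double>::max();
            LocalShift best{bx + box / 2, by + box / 2, 0, 0};
            for (int dy = -radius; dy <= radius; ++dy) {
                for (int dx = -radius; dx <= radius; ++dx) {
                    double err = 0.0;
                    for (int j = 0; j < box; ++j) {
                        for (int i = 0; i < box; ++i) {
                            double d = ref.at(bx + i, by + j)
                                     - frame.at(bx + i + dx, by + j + dy);
                            err += d * d;
                        }
                    }
                    if (err < bestErr) { bestErr = err; best.dx = dx; best.dy = dy; }
                }
            }
            out.push_back(best);
        }
    }
    return out;
}
```

When the atmosphere distorts different parts of the frame differently, the returned shifts differ from box to box, which a single global shift cannot represent. A real multipoint stacker would then still have to warp or blend the patches, which this sketch leaves out.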

This is why the planetary part of Siril needs a huge refactoring.

Of course, if you want to try it, PSS has freely available documentation that explains all the steps used.

Cheers,

Well, it seems that a simple approach built around cv::matchTemplate() with the TM_CCORR_NORMED method gives quite decent results. It has for me, at least.

For example, on the 3300-image SER I was referring to, Siril can stack only 63 images. After rejecting the worst images, I stacked all of the remaining 2080 images using this “matchTemplate() method”.
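For illustration, once each frame has an integer shift, the stacking step can be as simple as a shift-and-add average. The C++ sketch below assumes exactly that (plain mean stacking, whole-pixel shifts, rejected frames flagged with `ok == false`); `Gray`, `Shift` and `stackShifted` are hypothetical names, and this is neither Siril's stacking code nor necessarily what the Python script does.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Minimal greyscale image, row-major float pixels (hypothetical helper type).
struct Gray {
    int w, h;
    std::vector<float> px;
};

// Displacement of a frame relative to the reference; ok == false means rejected.
struct Shift { int dx, dy; bool ok; };

// Average all accepted frames after undoing their shift.
// Output pixels that no accepted frame covers are left at 0.
Gray stackShifted(const std::vector<Gray>& frames, const std::vector<Shift>& shifts) {
    assert(!frames.empty() && frames.size() == shifts.size());
    const int w = frames[0].w, h = frames[0].h;
    const std::size_t n = static_cast<std::size_t>(w) * h;
    std::vector<double> sum(n, 0.0);
    std::vector<int> count(n, 0);

    for (std::size_t k = 0; k < frames.size(); ++k) {
        if (!shifts[k].ok) continue;  // skip rejected frames
        assert(frames[k].w == w && frames[k].h == h);
        for (int y = 0; y < h; ++y) {
            for (int x = 0; x < w; ++x) {
                // Where the reference pixel (x, y) sits in frame k.
                int sx = x + shifts[k].dx, sy = y + shifts[k].dy;
                if (sx < 0 || sy < 0 || sx >= w || sy >= h) continue;
                std::size_t dst = static_cast<std::size_t>(y) * w + x;
                std::size_t src = static_cast<std::size_t>(sy) * w + sx;
                sum[dst] += frames[k].px[src];
                ++count[dst];
            }
        }
    }

    Gray out{w, h, std::vector<float>(n, 0.0f)};
    for (std::size_t i = 0; i < n; ++i)
        if (count[i] > 0) out.px[i] = static_cast<float>(sum[i] / count[i]);
    return out;
}
```

Dividing by the per-pixel count rather than by the number of frames keeps the borders, which only some frames cover, at the right brightness.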

Indeed, but it would be interesting to process the same data with PSS. And I think that PSS will win.

Could you make a test to see differences?

Thanks again for the tip about matchTemplate

Which algorithm did you use in Siril?

I tried both Image Pattern Alignment and ECC, with comparable results (see the first screenshot in the post).

I didn’t mention it, but I gave PSS a chance too. However, it seems it was not able to handle the 2080 images and just crashed (I only have 8GB RAM). I will retry and keep you informed.

Indeed, PSS just progressively saturates the swap and prints messages such as:

```
[...]
Next alignment rectangle tried: 44<y<946, 713<x<2051
15-37-27.1 Warning: No valid shift computed at frame 2222, will try another stabilization patch
Next alignment rectangle tried: 1848<y<2750, 2720<x<4058
15-37-58.2 Warning: No valid shift computed at frame 2222, will try another stabilization patch
Next alignment rectangle tried: 1397<y<2299, 44<x<1382
15-38-29.5 Warning: No valid shift computed at frame 2222, will try another stabilization patch
Next alignment rectangle tried: 946<y<1848, 44<x<1382
15-39-00.7 Warning: No valid shift computed at frame 2222, will try another stabilization patch
Next alignment rectangle tried: 495<y<1397, 44<x<1382
[...]
```

Could you share your video plz?

Sure, but it’s about 37GB. Any idea for sharing? I don’t have WeTransfer “upgrade” (needed for files >= 2GB).

Ouch. It’s a bit huge.

Increase the stabilisation search width and maybe the stabilisation patch size in PSS preferences.

It could help.

You were right; increasing those parameters allowed me to get an image.

Computation time was about 20 times that of matchTemplate().

I don’t see many visible differences in quality, except some strange artefacts.

Here is part of the PSS stacked image.

I think I managed to integrate my algorithm into Siril. I got the same results as with my Python script on the (very few) SER files I kept there.

I think I’ll propose a patch against 1.1.x master once I have cleaned up my code a bit.

Also, I might have a few questions later regarding registration.c and ecc.cpp (as I feel that some computations/allocations, repeated for each image, could be avoided). Since I used ECC as a “template”, I might need some support to mimic it correctly (e.g. not copying a non-optimal design, if any, and not missing something in the process of trying to optimize).


I’d like to get short movies or even TIF/FITS files if anyone wants to share. This could help me test the algorithm.

Yep. And I’ve commented it. Thanks a lot.