I’ve managed to test my concept with Siril. My HDD suffered a head crash last year, so I’ve lost all my raw subs. If anyone has OSC or DSLR data they would be happy for me to test on, preferably already set up in a Siril project, that would be great!

Here is the process:

CFALD: CFA-Luminance Drizzle

A RAW-domain luminance extraction technique for OSC astrophotography

Author: Shaun Slade (2025)

Version: 1.0

Abstract

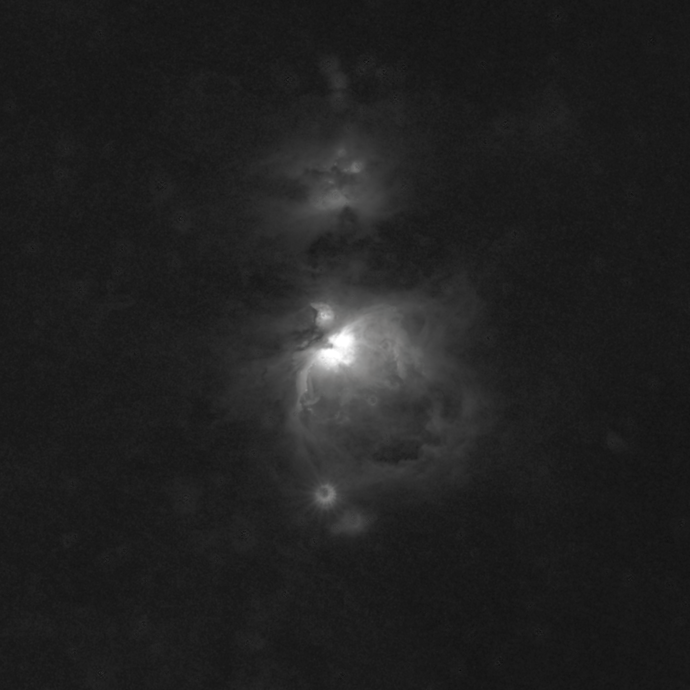

CFA-Luminance Drizzle (CFALD) is a novel processing technique for one-shot colour (OSC) astrophotography. It extracts a high-signal-to-noise-ratio (SNR) luminance channel directly from the RAW Bayer CFA before demosaicing. CFALD averages each native 2×2 RGGB block into a single luminance value and writes that value back into all four pixels of the block. This preserves the full image dimensions while achieving the noise reduction of true 2×2 binning. Drizzle integration then reconstructs subpixel detail lost in the averaging step, particularly when the subs are dithered. The resulting luminance master behaves much like a dedicated mono L exposure. It can be combined with an RGB stack debayered from the same subs, giving a full LRGB workflow from OSC data alone.

1. Method Overview

CFALD operates purely in the RAW domain, prior to interpolation, colour mixing, or debayering.

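No interpolated values enter the luminance, so it avoids the spatially correlated noise that demosaicing introduces. This gives cleaner noise characteristics than a synthetic luminance derived from debayered RGB images.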

1.1 CFA-to-Luminance Mapping

Each 2×2 Bayer block contains one R, one B, and two G samples. CFALD computes:

$$L = \frac{R + G_1 + G_2 + B}{4}$$

This luminance value is then written back into the same 2×2 region, producing a full-resolution frame composed of uniform 2×2 luminance tiles.

This step preserves:

- registration compatibility
- star profile geometry
- stack alignment consistency
- drizzle subpixel offsets

while still giving the noise reduction of a true 2×2 bin.
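The mapping above can be sketched in a few lines of NumPy. This is a minimal illustration, not the author's implementation. The function name `cfald_luminance` is hypothetical, and the input is assumed to be an already calibrated CFA frame with even dimensions. The noise check assumes independent Gaussian noise of equal σ in all four photosites. Under that assumption Var(L) = 4σ²/16 = σ²/4, so the noise of L is σ/2, which is the 2×2-bin reduction mentioned above.

```python
import numpy as np

def cfald_luminance(cfa):
    """Average each 2x2 Bayer block and write the mean back into all four pixels.

    cfa: 2-D array of calibrated RAW CFA values with even height and width.
    Returns a float array of the same shape made of uniform 2x2 luminance tiles.
    (Hypothetical helper, written for illustration only.)
    """
    h, w = cfa.shape
    if h % 2 or w % 2:
        raise ValueError("CFA frame dimensions must be even")
    # Axes 1 and 3 are the row and column inside each 2x2 block
    blocks = cfa.astype(np.float64).reshape(h // 2, 2, w // 2, 2)
    lum = blocks.mean(axis=(1, 3))  # L = (R + G1 + G2 + B) / 4 per block
    # Repeat each block mean into a 2x2 tile to keep the full-resolution dimensions
    return np.repeat(np.repeat(lum, 2, axis=0), 2, axis=1)

# Tiny worked example: two RGGB blocks side by side
cfa = np.array([[10, 20, 30, 40],
                [30, 40, 50, 60]])
print(cfald_luminance(cfa))
# [[25. 25. 45. 45.]
#  [25. 25. 45. 45.]]

# Noise check: constant signal plus independent Gaussian noise (sigma = 1) per photosite
rng = np.random.default_rng(0)
noisy = 100.0 + rng.normal(0.0, 1.0, size=(1000, 1000))
ratio = cfald_luminance(noisy).std() / noisy.std()
print(f"noise ratio L / raw: {ratio:.3f}")  # close to 1/sqrt(4) = 0.5
```

All four samples receive equal weight, so the function does not need to know the Bayer order (RGGB, BGGR and so on). The four pixels of each tile are identical, which means their noise is fully correlated. Any structure finer than a tile has to be recovered by the drizzle step.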

2. Drizzle Reconstruction

Each subframe is naturally offset from the others (or deliberately dithered), so drizzle integration can recover resolution otherwise lost to the averaging. Drizzle shrinks each input pixel by a pixel fraction (pixfrac) and maps it onto a finer output grid using that frame's registration, providing:

- improved detail retention
- smoother low-surface-brightness (low-SB) gradients
- reduced fixed-pattern noise
- faithful reconstruction of subpixel structure

The result is a full-resolution, high-SNR luminance master.
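To make the drizzle step concrete, here is a minimal sketch of the classic drizzle idea restricted to pure translations. Each input pixel is shrunk to a square "drop" of side `pixfrac` (in input-pixel units) and placed on an output grid `scale` times finer. Its value is then spread over the output pixels in proportion to the overlap area. The function name, the `(dy, dx)` shift convention and the default values are assumptions for illustration. Production drizzle implementations also handle rotation, distortion and per-pixel weight maps, which this sketch omits.

```python
import math
import numpy as np

def drizzle_translate(frames, shifts, scale=2, pixfrac=0.7):
    """Drizzle frames onto a grid `scale` times finer (translation-only sketch).

    frames:  list of 2-D arrays of identical shape (e.g. CFALD luminance subs)
    shifts:  list of (dy, dx) offsets, in input pixels, added to each frame's pixel
             coordinates to place it on the reference grid (assumed convention)
    pixfrac: side length of each shrunken drop as a fraction of an input pixel
    Returns (image, weight), both of shape (h * scale, w * scale).
    """
    h, w = frames[0].shape
    H, W = h * scale, w * scale
    acc = np.zeros((H, W))
    wgt = np.zeros((H, W))
    half = 0.5 * pixfrac * scale  # half-width of a drop, in output pixels
    for frame, (dy, dx) in zip(frames, shifts):
        for i in range(h):
            for j in range(w):
                # Centre of input pixel (i, j) on the output grid
                cy = (i + 0.5 + dy) * scale
                cx = (j + 0.5 + dx) * scale
                y0, y1 = cy - half, cy + half
                x0, x1 = cx - half, cx + half
                for oy in range(max(math.floor(y0), 0), min(math.ceil(y1), H)):
                    oh = min(y1, oy + 1) - max(y0, oy)  # vertical overlap
                    if oh <= 0:
                        continue
                    for ox in range(max(math.floor(x0), 0), min(math.ceil(x1), W)):
                        ow = min(x1, ox + 1) - max(x0, ox)  # horizontal overlap
                        if ow <= 0:
                            continue
                        area = oh * ow
                        acc[oy, ox] += area * frame[i, j]
                        wgt[oy, ox] += area
    # Overlap-weighted mean; output pixels that no drop touched stay at zero
    image = np.divide(acc, wgt, out=np.zeros_like(acc), where=wgt > 0)
    return image, wgt
```

With `pixfrac=1` and a single unshifted frame, the output is each input pixel replicated into a `scale`×`scale` block, so no detail is gained. Finer structure can only emerge when several frames with different subpixel shifts (for example from dithering) land on the grid. Output pixels that no drop reached keep zero weight. This is why small `pixfrac` values need many well-dithered subs.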

3. Workflow Summary

1. Load the RAW CFA frames, calibrated with biases and flats.
2. For each 2×2 block, compute the luminance

   $$L = \frac{R + G_1 + G_2 + B}{4}$$

   and write L back into the corresponding 2×2 region.
3. Register all luminance subs.
4. Drizzle-integrate the luminance stack.
5. Stack RGB separately from the same subs using the standard debayered workflow, as in a superluminance approach.
6. Combine the CFALD L with the RGB stack using an LRGB blend, typically with star protection.
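For the final combination, the sketch below swaps in a simple ratio-based luminance replacement. Each RGB pixel is scaled so that its luminance matches the CFALD master, and an optional star mask keeps star cores RGB-only. This is an assumed stand-in, not Siril's LRGB tool or the author's blend. It assumes the L master and the RGB stack are linear, registered, background-matched and the same size; a 2× drizzled L would first need the RGB resampled to match. It uses Rec. 709 luma weights as the RGB luminance definition. `lrgb_ratio_combine` and `star_mask` are hypothetical names.

```python
import numpy as np

# Rec. 709 luma weights, used here as an assumed definition of RGB luminance
LUMA_WEIGHTS = np.array([0.2126, 0.7152, 0.0722])

def lrgb_ratio_combine(lum, rgb, star_mask=None, eps=1e-6):
    """Give a linear RGB image the luminance `lum` by per-pixel scaling (sketch).

    lum:       (H, W) luminance master, already matched to the RGB in size and scale
    rgb:       (H, W, 3) linear RGB stack
    star_mask: optional (H, W) array in [0, 1]; 1 keeps the original RGB (star protection)
    """
    y = rgb @ LUMA_WEIGHTS  # current luminance of each RGB pixel
    # Scale all three channels by the same factor so R:G:B ratios are kept
    combined = rgb * (lum / np.maximum(y, eps))[..., None]
    if star_mask is not None:
        m = np.clip(star_mask, 0.0, 1.0)[..., None]
        combined = m * rgb + (1.0 - m) * combined
    return combined
```

Scaling by a ratio preserves each pixel's R:G:B proportions. It can, however, push values above the original range, so clip or stretch the result before display.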

4. Practical Results

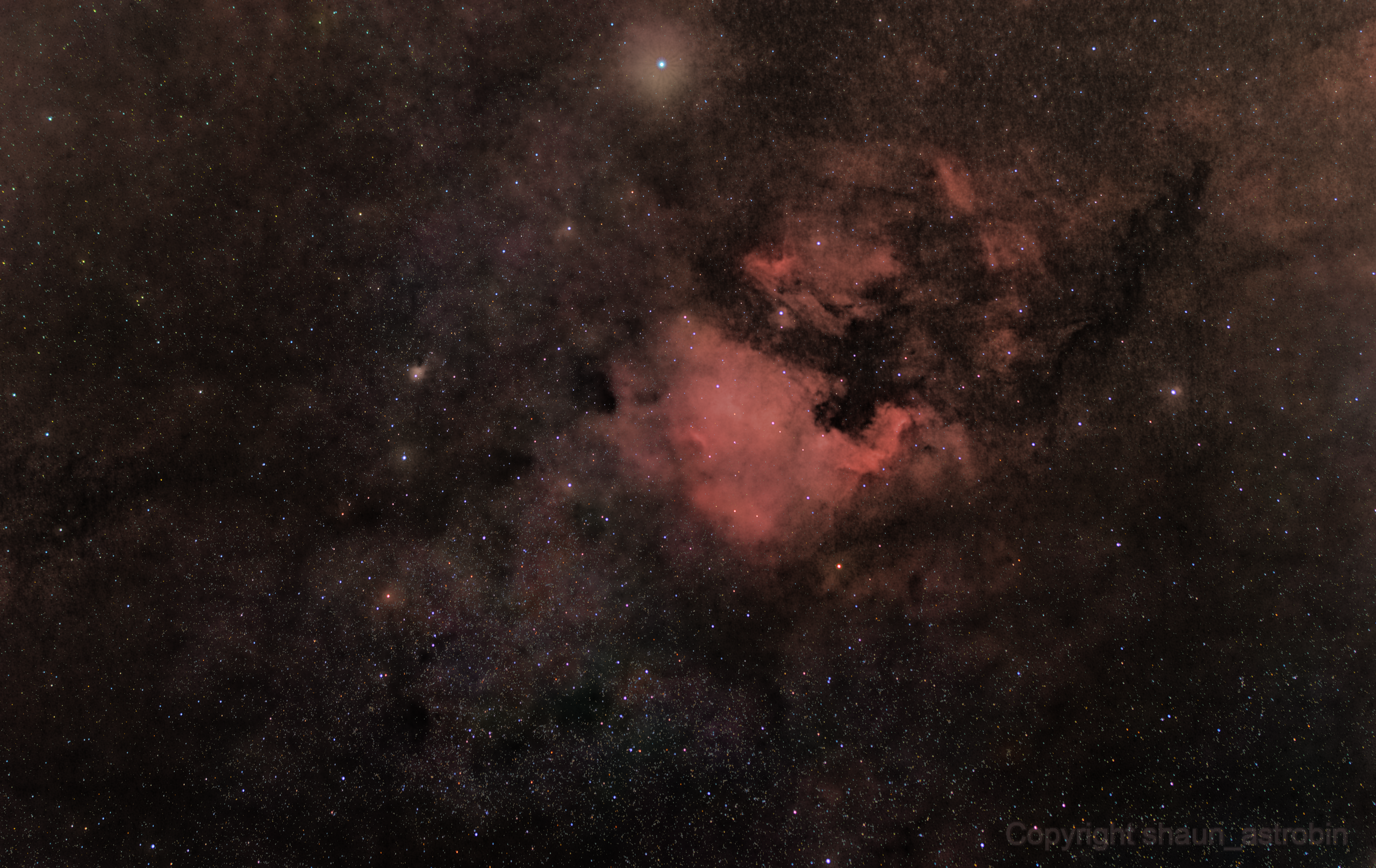

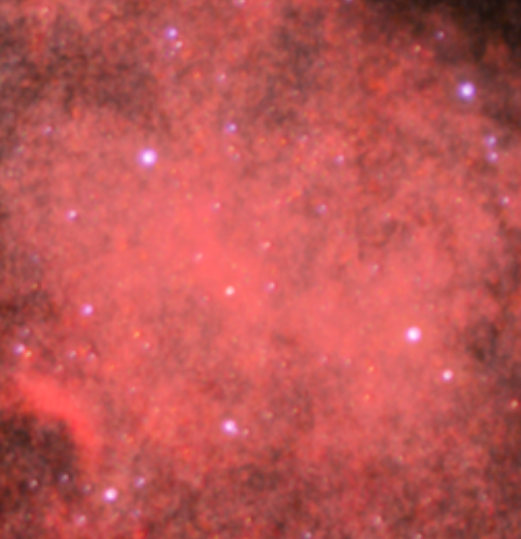

Testing on DSLR (OSC) datasets shows:

- a practical SNR gain of ~1.5–1.8× over luminance extracted from debayered RGB
- visibly improved dust-lane structure
- fainter galaxy halos recoverable without chroma noise
- higher stretch tolerance
- reduced fixed-pattern noise (even without dithering)
- LRGB behaviour similar to mono + RGB workflows
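Anyone testing the method on their own data could check a figure like the SNR gain above with a robust background measurement. The sketch below is one possible way to do this, not the method behind the quoted numbers. It estimates noise with the median absolute deviation, scaled by 1.4826 to match σ for Gaussian noise, and takes SNR as the background median divided by that noise. It assumes both luminance masters are linear, registered, free of any added pedestal, and measured over the same star-free region. The helper names are hypothetical.

```python
import numpy as np

def mad_sigma(values):
    """Robust noise estimate: 1.4826 x median absolute deviation (Gaussian-consistent)."""
    values = np.asarray(values, dtype=np.float64)
    return 1.4826 * np.median(np.abs(values - np.median(values)))

def background_snr(image, region):
    """Median / robust sigma inside a star-free background region (tuple of slices)."""
    patch = image[region]
    return np.median(patch) / mad_sigma(patch)

def snr_gain(lum_new, lum_ref, region):
    """SNR of lum_new relative to lum_ref, measured over the same region."""
    return background_snr(lum_new, region) / background_snr(lum_ref, region)

# Synthetic check: same background level, but the reference has twice the noise
rng = np.random.default_rng(0)
ref = 100.0 + rng.normal(0.0, 2.0, size=(200, 200))
new = 100.0 + rng.normal(0.0, 1.0, size=(200, 200))
region = (slice(0, 200), slice(0, 200))
print(f"SNR gain: {snr_gain(new, ref, region):.2f}")  # close to 2
```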

5. Limitations and Considerations

- Best results are achieved with dithering, which enables optimal drizzle reconstruction.
- Flats and biases must be applied before CFALD extraction.
- Very undersampled data may show block artifacts before drizzle.
- CFALD works best on galaxy and nebula structure; star cores should remain RGB-only.
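The calibration order matters because the flat corrects each photosite individually. Once four photosites are averaged into one L value, their separate vignetting, dust and pixel-response differences can no longer be corrected exactly. Below is a minimal sketch of bias and flat calibration on the CFA data. It assumes master frames are already built and that no darks are used. The function name `calibrate_cfa` is hypothetical, and this is not Siril's calibration code.

```python
import numpy as np

def calibrate_cfa(light, master_bias, master_flat):
    """Bias-subtract and flat-field a RAW CFA frame before CFALD extraction (sketch).

    All inputs are 2-D arrays of the same shape, still in the Bayer (CFA) layout.
    Dark subtraction is omitted here to mirror the text; add it if you use darks.
    """
    light = np.asarray(light, dtype=np.float64)
    bias = np.asarray(master_bias, dtype=np.float64)
    flat = np.asarray(master_flat, dtype=np.float64) - bias
    # Normalise the bias-subtracted flat to unit mean so the light keeps its scale
    flat /= flat.mean()
    return (light - bias) / flat

# Synthetic check: uniform sky of 50 ADU seen through a vignetting pattern, bias of 10 ADU
yy, xx = np.mgrid[0:4, 0:4]
vignette = 0.8 + 0.05 * (yy + xx)
light = 10.0 + 50.0 * vignette
flat = 10.0 + 1000.0 * vignette
calibrated = calibrate_cfa(light, np.full((4, 4), 10.0), flat)
```

In the synthetic check the vignetting pattern cancels. Every pixel comes out at 50 × mean(vignette) = 47.5 ADU, which is the flat-normalised sky level.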

Conclusion

CFALD enables OSC users to generate a true luminance channel directly from RAW sensor data, achieving mono-like luminance behaviour without a dedicated mono camera. This technique meaningfully enhances faint-structure detectability and noise performance using the same acquisition time, offering a new LRGB processing workflow for OSC imagers.

December 8th 2025