First and foremost, I want to make sure everyone knows that I’m not trying to ruffle any feathers. This community is amazing, and as a newcomer I don’t want to come in acting like I know better than everyone else. My background is in commercial photography, where precision is king, but I don’t claim to be a software engineer or to have a PhD in light/color science.

While I have very limited experience with Sigma cameras, I would be shocked if this were the case, especially since you were actually posting different matrix sets.

I’m trying to use vocabulary consistent with the manual, but this is essentially just a fancy way of saying auto white balance. For AWB to work, the camera analyzes the information provided by the sensor and draws a conclusion about what the lighting condition is. HOW it does this is a different topic altogether and well above my pay grade, but I’m assuming it’s some type of fancy averaging system.

I plan on diving deeper into this paper, but a quick scan shows it’s pointing out exactly what I’m saying.

So at minimum it would provide better masking ability in the pipeline pre-calibration, but to me it’s more about clean, accurate color, which in my eyes is a huge part of why we all shoot raw in the first place.

Diving even deeper into the way darktable functions I continue to get confused… just when I think I’ve figured it out, I find myself back at the front door lol.

Looking at the EXIF data from my Zf, I noticed it doesn’t actually provide channel multipliers for D65, but it does provide the exact multipliers from the camera’s own assessment.

jp@darkhorse:~$ exiftool -WhiteBalance* -WB* -ColorTemp* -ALL '/media/jp/NIKON Z F/DCIM/101NCZ_F/_DSC9009.NEF' | grep -i "balance\|temp\|calib"

White Balance : Auto1

White Balance Fine Tune : 0 0

Color Temperature Auto : 3250

White Balance : Auto1

White Balance Fine Tune : 0 0

Color Temperature Auto : 3250

Color Balance Version : 0803

Blue Balance : 2.15625

Red Balance : 1.351563
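To make it concrete what those two numbers do, here is a minimal sketch of applying them as per-channel gains. It assumes the values are plain multipliers on linear, black-subtracted camera RGB with green fixed at 1.0. The function name and the gray-patch values are hypothetical, made up purely for illustration, and this is not meant to represent darktable's actual code.

```python
# Multipliers reported by exiftool for this NEF.
# ASSUMPTION: green is the implied reference channel at 1.0.
RED_BALANCE = 1.351563
BLUE_BALANCE = 2.15625


def apply_as_shot_wb(rgb, red=RED_BALANCE, green=1.0, blue=BLUE_BALANCE):
    """Scale linear, black-subtracted camera RGB by per-channel multipliers."""
    r, g, b = rgb
    return (r * red, g * green, b * blue)


# Hypothetical raw values for a gray patch under the scene light,
# constructed as the reciprocals of the multipliers (not measured data).
gray_patch = (1.0 / RED_BALANCE, 1.0, 1.0 / BLUE_BALANCE)

balanced = apply_as_shot_wb(gray_patch)
print(balanced)
```

If the camera's assessment is right, a neutral object in the scene ends up with equal R, G and B after this scaling, which is why I'd expect the image to look neutral when only these multipliers are applied.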

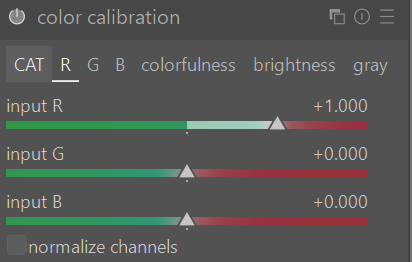

My impression in my last post was that darktable used standard information in the EXIF to scale to D65. That no longer seems possible to me, and it also halfway explains why toggling between camera reference and as shot to reference gives the same results (even though the sliders/values change). The curious part is that with the calibration off, both are quite warm for this image at 3250K. If darktable were using the provided multipliers and nothing else, the image should appear neutral, like it does with the legacy “as shot” method. So at this point, I’m really not sure what is going on with the two modern options after all.

To add to the confusion, the software has this profile. It’s awesome that someone is doing this work, but I’m not too sure what the software really does with this information. The matrix is useful, but without knowing its calibration illuminant (D65? Something else?), it’s unclear how darktable applies it to images shot under different lighting.

<Camera make="NIKON CORPORATION" model="NIKON Z f" mode="14bit-compressed">

<ID make="Nikon" model="Z f">Nikon Z f</ID>

<CFA width="2" height="2">

<Color x="0" y="0">RED</Color>

<Color x="1" y="0">GREEN</Color>

<Color x="0" y="1">GREEN</Color>

<Color x="1" y="1">BLUE</Color>

</CFA>

<Crop x="0" y="0" width="0" height="0"/>

<Sensor black="1008" white="15892"/>

<ColorMatrices>

<ColorMatrix planes="3">

<ColorMatrixRow plane="0">11607 -4491 -977</ColorMatrixRow>

<ColorMatrixRow plane="1">-4522 12460 2304</ColorMatrixRow>

<ColorMatrixRow plane="2">-458 1519 7616</ColorMatrixRow>

</ColorMatrix>

</ColorMatrices>

</Camera>
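One way to poke at this matrix is to ask what white balance it would imply for a D65 white. The sketch below rests on two assumptions that I have not confirmed for this file: that the values are scaled by 10000, and that the matrix maps XYZ to camera RGB (the convention used by dcraw-style and DNG `ColorMatrix` data). The function names are my own, and I'm not claiming this is how darktable does it.

```python
# Matrix rows copied from the <ColorMatrix> element above.
COLOR_MATRIX = [
    [11607, -4491, -977],
    [-4522, 12460, 2304],
    [-458, 1519, 7616],
]

# ASSUMPTION: values are scaled by 10000 and map XYZ -> camera RGB.
SCALE = 10000.0

# CIE XYZ of the D65 white point (2-degree observer), normalized to Y = 1.
XYZ_D65 = (0.95047, 1.0, 1.08883)


def camera_response(xyz, matrix=COLOR_MATRIX, scale=SCALE):
    """Multiply the scaled 3x3 matrix by an XYZ triplet to get camera RGB."""
    return tuple(
        sum(row[j] * xyz[j] for j in range(3)) / scale for row in matrix
    )


def neutral_multipliers(camera_rgb):
    """Per-channel gains (green = 1.0) that equalize the given camera RGB."""
    r, g, b = camera_rgb
    return (g / r, 1.0, g / b)


d65_camera = camera_response(XYZ_D65)
d65_multipliers = neutral_multipliers(d65_camera)
print("camera RGB of a D65 white:", d65_camera)
print("implied D65 multipliers (R, G, B):", d65_multipliers)
```

Under those assumptions the implied multipliers come out at roughly 1.95 for red and 1.14 for blue, which is very different from the as-shot 1.35 and 2.16 at 3250K. That would at least be consistent with the matrix describing a D65-ish calibration, but it doesn't prove it.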

At the end of the day, what I’m saying is that the matrix system isn’t EVIL, but it feels a bit like working on display-referred information when we could in theory be working on scene-referred data. Once we step past demosaicing the image, we’re trying to force a conversion that is just not as simple/linear as the math would like it to be. I know it’s not exactly the same as trying to fix a badly compressed JPEG, but as far as I can see that is essentially still what we are doing, just with a lot more wiggle room.

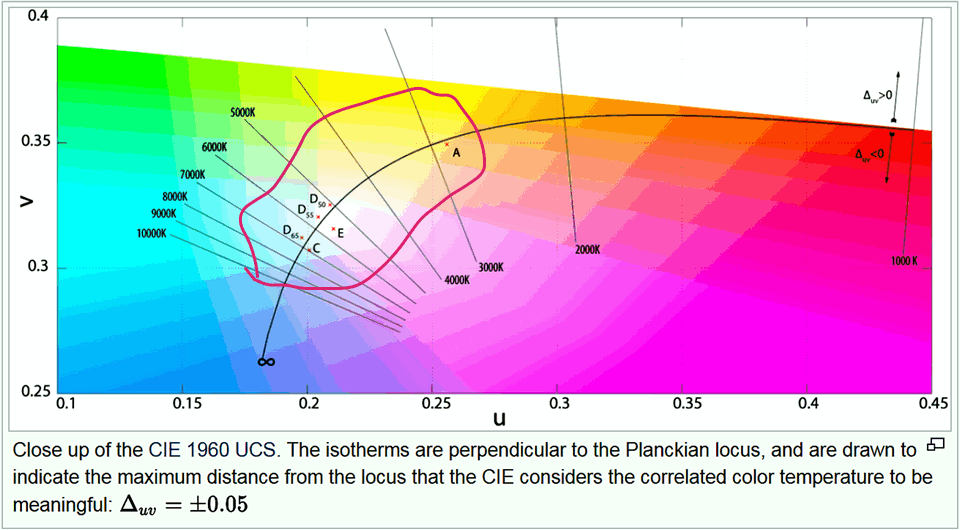

The fundamental question is: why discard the camera’s accurate scene analysis (3250K with specific multipliers) to force everything through a theoretical D65 reference, then try to fix the resulting color cast with CAT?
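For readers who haven't seen what a CAT does numerically, here is a minimal sketch of a von Kries style adaptation using the Bradford matrix. Bradford is just a well-known stand-in that I picked for illustration; I'm not claiming it is the transform darktable uses. CIE illuminant A (about 2856K) stands in for the warm source because I don't have an XYZ white point for the 3250K estimate, and the function names are my own.

```python
# Bradford cone-response matrix (XYZ -> LMS-like space).
BRADFORD = [
    [0.8951, 0.2664, -0.1614],
    [-0.7502, 1.7135, 0.0367],
    [0.0389, -0.0685, 1.0296],
]

# White points as CIE XYZ (2-degree observer), normalized to Y = 1.
# ASSUMPTION: illuminant A is used as a stand-in for the warm scene light.
WHITE_A = (1.09850, 1.0, 0.35585)
WHITE_D65 = (0.95047, 1.0, 1.08883)


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return tuple(sum(m[i][j] * v[j] for j in range(3)) for i in range(3))


def inverse_3x3(m):
    """Invert a 3x3 matrix via the adjugate formula."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]


def bradford_adapt(xyz, src_white, dst_white):
    """Adapt an XYZ color from the source white to the destination white."""
    src_lms = mat_vec(BRADFORD, src_white)
    dst_lms = mat_vec(BRADFORD, dst_white)
    lms = mat_vec(BRADFORD, xyz)
    # Von Kries step: scale each cone-like channel by the ratio of whites.
    scaled = tuple(lms[k] * dst_lms[k] / src_lms[k] for k in range(3))
    return mat_vec(inverse_3x3(BRADFORD), scaled)


adapted_white = bradford_adapt(WHITE_A, WHITE_A, WHITE_D65)
print(adapted_white)
```

The source white lands on the destination white by construction. Every other color gets moved by the same three gains in the cone-like space, which is the part I'm questioning when it's stacked on top of a white balance that was already forced to D65.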

Also… as far as I understand, D65 is/was a standard with roots going back to the earliest days of color displays (we’re talkin’ tubes). In this day and age, with modern digital displays and capture devices, it seems like a weird place to standardize. Daylight is generally close to 5500K (along with flash), tungsten is 3200K, and modern home/commercial lighting typically sits somewhere in between the two… Why not push to 4000K or something if we must take that approach? An illuminant at or near 6500K is actually pretty uncommon in the real world.