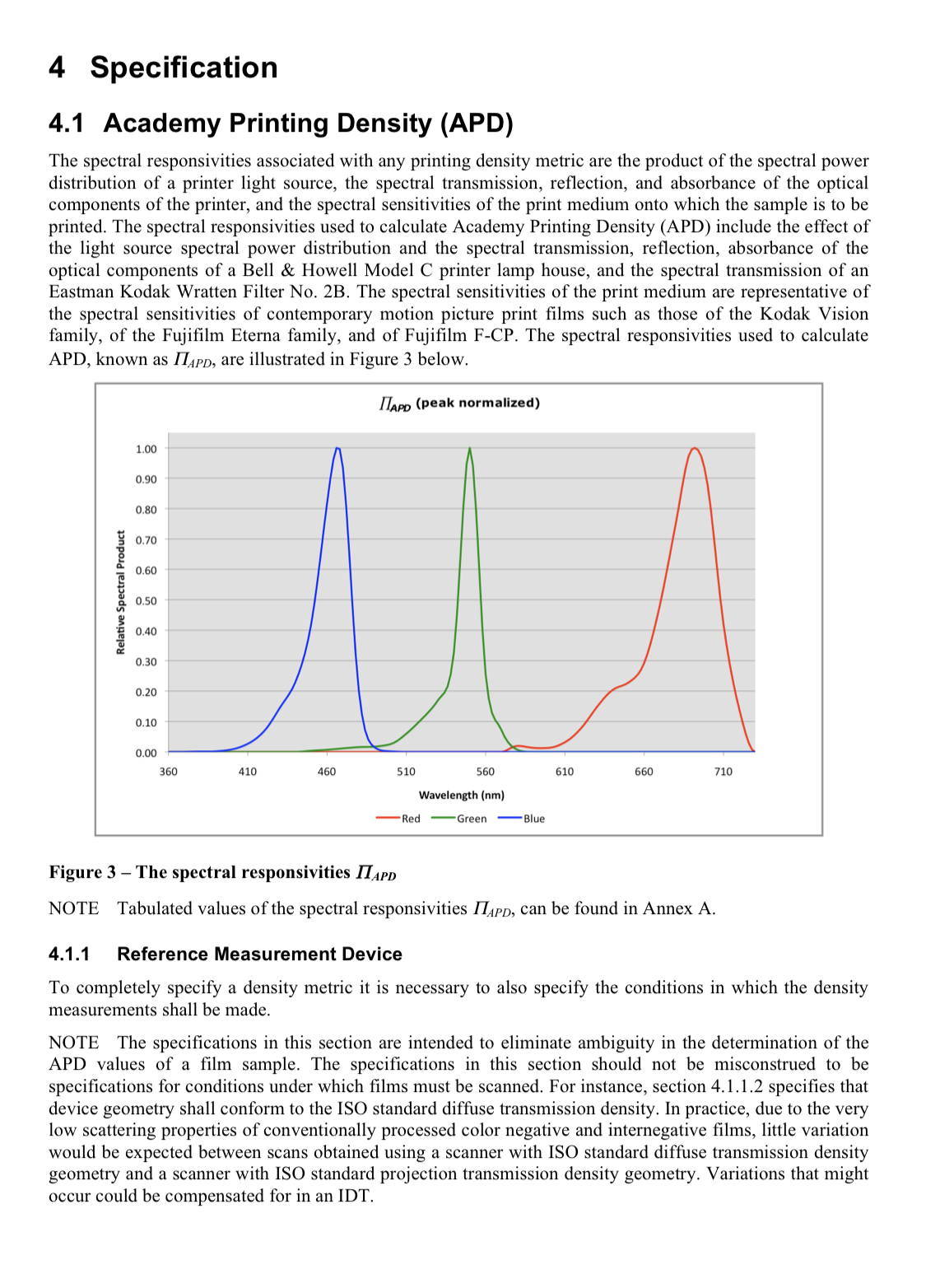

The basic problem is that film is designed to be “seen” by a combination of light source spectral emission, filter density, and paper spectral sensitivity. This specific spectral response looks something like this:

That’s based on Kodak motion picture print film, but print film has very similar responsivities to print paper. When a negative is seen by the system it was designed for, and processed into the dye layers of the print medium, it will automatically have technically correct color (which is often different from aesthetic intentions).

The goal in camera scanning is to conform our own light source + filtration + camera spectral response as closely as possible to the system that film is designed to be “seen” by. The inherent problem with camera scanning, though, is that cameras are designed to “see” similarly to human eyes, and their response is markedly different from the film printing density response. Here you can see the spectral response of a Sony A7RII vs the approximate spectral sensitivities of a photographic print paper:

All three of the paper’s channels have a narrower response than the camera sensor’s, but the biggest difference is the spectral position of the red channel sensitivity. The peak sensitivity of the digital camera’s red channel is centered in the area of the paper’s lowest sensitivity, which is squarely in the middle of the wavelengths that comprise the infamous “orange mask”. To make matters worse, both the red AND green channels are highly sensitive to this range of yellow-oranges, making it essentially impossible to use white balance to eliminate it. So what happens is that you end up building red channel density AND green channel density from wavelengths of light that are designed to be ignored, while very little valuable red channel density is measured due to the camera’s decreasing sensitivity in the true red spectral region (650 nm).

Because of this high level of red/green channel crossover in camera sensors, the only way to get consistently high quality scans is to use narrow band lighting (RGB LED and/or sharp cut filtration) to capture only the dye layer densities at the wavelengths where the sensor and film dyes each have maximum channel separation (i.e. minimum crossover). This can be done with narrow band RGB light, or with specialized filtration over a high quality white light. The separation can sometimes be approximated digitally after capture, but not with any level of consistency, because every image would require a different level of correction. After all, how could you possibly distinguish between blues formed by the orange mask and those that were formed by a combination of the magenta and yellow dye layers? All you can do after capture is attenuate ALL blues. Some images will look ok, others won’t.

The best solution for total color separation is to take three narrow band exposures, extract the exposed color channel from each, and combine them into an RGB image. From there it’s a matter of digitally profiling to get an even closer match to the printing densities, and emulating the color conversion from the paper’s spectral sensitivity to its developed dye densities. If we can do all of this, the result will be consistent, nuanced, high quality scans that match the original color intent of the film. So far, all the film scanning solutions I’ve seen have instead tried to use auto-correction algorithms to normalize scans, with really inconsistent results.
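To make the three-exposure idea concrete, here is a minimal sketch in Python with NumPy. It is only an illustration, not a finished pipeline. It assumes the three frames are already demosaiced, linear (no tone curve applied), pixel-aligned, and loaded as height × width × 3 arrays. The function name `combine_narrowband` and the sample values are made up for the example. The profiling and print emulation steps are not covered.

```python
import numpy as np

def combine_narrowband(red_exposure, green_exposure, blue_exposure):
    """Build one RGB image from three narrow band captures.

    Each argument is an H x W x 3 array shot under a single narrow band
    light (red, green, or blue). Only the channel matching the light is
    kept from each frame; the other two channels are discarded.
    Assumption: the frames are linear, demosaiced, and pixel-aligned.
    """
    r = np.asarray(red_exposure, dtype=np.float64)[..., 0]    # red channel of the red-lit frame
    g = np.asarray(green_exposure, dtype=np.float64)[..., 1]  # green channel of the green-lit frame
    b = np.asarray(blue_exposure, dtype=np.float64)[..., 2]   # blue channel of the blue-lit frame
    if not (r.shape == g.shape == b.shape):
        raise ValueError("all three exposures must have the same dimensions")
    return np.stack([r, g, b], axis=-1)

# Tiny synthetic frames (illustrative values only). Each frame carries some
# signal in the "wrong" channels to mimic sensor crossover.
shape = (2, 2, 3)
red_frame = np.zeros(shape) + np.array([0.8, 0.1, 0.0])
green_frame = np.zeros(shape) + np.array([0.2, 0.6, 0.1])
blue_frame = np.zeros(shape) + np.array([0.0, 0.1, 0.4])

combined = combine_narrowband(red_frame, green_frame, blue_frame)
print(combined[0, 0])
```

The crossover signal in the unused channels of each frame never reaches the combined image. That is the point of separating the exposures instead of trying to correct a single white-light capture afterwards.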