Hi All.

My current system specs are as follows:

> [brian@Giger ~]$ inxi -F

System: Host: Giger Kernel: 5.6.16-1-MANJARO x86_64 bits: 64 Desktop: KDE Plasma 5.18.5 Distro: Manjaro Linux

Machine: Type: Desktop Mobo: ASUSTeK model: A88XM-A v: Rev X.0x serial: UEFI: American Megatrends v: 1801

date: 08/25/2014

CPU: Topology: Quad Core model: AMD A8-6600K APU with Radeon HD Graphics bits: 64 type: MCP L2 cache: 2048 KiB

Speed: 2177 MHz min/max: 1900/3900 MHz Core speeds (MHz): 1: 2177 2: 2155 3: 1895 4: 1894

Graphics: Device-1: NVIDIA GP108 [GeForce GT 1030] driver: nvidia v: 440.82

Display: x11 server: X.Org 1.20.8 driver: nvidia resolution: 2560x1440~60Hz

OpenGL: renderer: GeForce GT 1030/PCIe/SSE2 v: 4.6.0 NVIDIA 440.82

Audio: Device-1: Advanced Micro Devices [AMD] FCH Azalia driver: snd_hda_intel

Device-2: NVIDIA GP108 High Definition Audio driver: snd_hda_intel

Device-3: VIA VX1 type: USB driver: hid-generic,snd-usb-audio,usbhid

Device-4: C-Media type: USB driver: hid-generic,snd-usb-audio,usbhid

Sound Server: ALSA v: k5.6.16-1-MANJARO

Network: Device-1: Realtek RTL8111/8168/8411 PCI Express Gigabit Ethernet driver: r8169

IF: enp4s0 state: up speed: 1000 Mbps duplex: full mac: f0:79:59:6e:12:57

Drives: Local Storage: total: 1.93 TiB used: 1.16 TiB (59.9%)

ID-1: /dev/sda vendor: Kingston model: SV300S37A120G size: 111.79 GiB

ID-2: /dev/sdb vendor: Seagate model: ST1000DM003-1ER162 size: 931.51 GiB

ID-3: /dev/sdc vendor: Seagate model: ST1000DM003-1CH162 size: 931.51 GiB

Partition: ID-1: / size: 109.30 GiB used: 10.23 GiB (9.4%) fs: ext4 dev: /dev/sda2

ID-2: /home size: 916.77 GiB used: 709.18 GiB (77.4%) fs: ext4 dev: /dev/sdb1

Sensors: System Temperatures: cpu: 11.2 C mobo: N/A gpu: nvidia temp: 38 C

Fan Speeds (RPM): N/A gpu: nvidia fan: 0%

Info: Processes: 221 Uptime: 16h 19m Memory: 15.58 GiB used: 3.96 GiB (25.4%) Shell: bash inxi: 3.0.37

I’ve been having slow exports from darktable. Running darktable from the command line with darktable -d opencl gives the following error:

728.899903 [pixelpipe_process] [export] using device 0

730.234856 [guided filter] unknown error: -4

730.623625 [guided filter] fall back to cpu implementation due to insufficient gpu memory

[export_job] exported to `/home/brian/Desktop/IMG_5070_01.jpg'

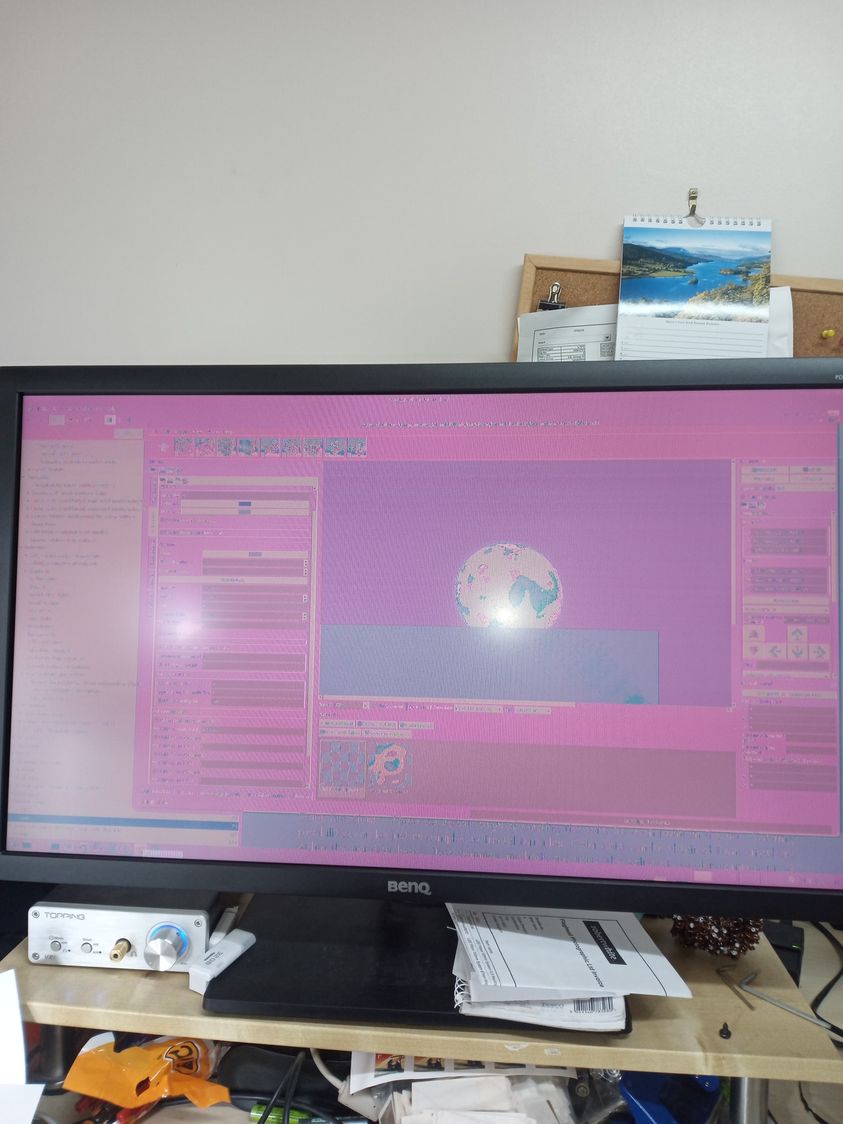

So I suspect I’m running out of memory on the GPU (a 2GB Nvidia GT 1030). My display is a 2560x1440 screen, so I suspect that may be using up GPU memory as well, especially since I’m running Plasma on Manjaro using the opengl compositor.
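One way to test that suspicion would be to read the card's memory usage before and during an export. Below is a minimal sketch, assuming `nvidia-smi` is installed with the proprietary driver and is on the PATH. The function names `parse_memory_line` and `query_gpu_memory` are my own, made up for illustration, and the parsing assumes the driver reports plain numbers rather than `[N/A]`.

```python
import subprocess


def parse_memory_line(line):
    """Parse one 'used, total' CSV line (values in MiB) into two ints."""
    used, total = (int(field.strip()) for field in line.split(","))
    return used, total


def query_gpu_memory():
    """Ask nvidia-smi for used and total memory of each GPU, in MiB.

    Assumes nvidia-smi is available; raises if the command fails.
    """
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=memory.used,memory.total",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return [
        parse_memory_line(line)
        for line in result.stdout.splitlines()
        if line.strip()
    ]


def describe(used, total):
    """Format a usage pair as a short human-readable string."""
    return f"{used} MiB used of {total} MiB ({100 * used / total:.1f}%)"
```

Calling `query_gpu_memory()` once with only the desktop running and again while darktable is exporting should show roughly how much of the 2GB the compositor takes and how much is left for OpenCL.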

Also, the GT 1030 does not support NVENC for video encoding.

So my question is this: would I see much benefit from upgrading the GPU? I plan to give the system a complete upgrade eventually (which will mean a new motherboard and RAM, since modern CPUs use different sockets).

If so, what would be a decent GPU for darktable, video encoding, etc.? I don’t game on my system; I have a PS4 for that.

Also, would I get more bang for buck going for AMD rather than Nvidia?

E.g. the Radeon RX 580 Pulse OC Light 8192MB GDDR5 PCI-Express Graphics Card (£180) or the GeForce GTX 1660 Twin Fan 6144MB GDDR5 PCI-Express Graphics Card (£200)?

Suggestions welcome!