As a long time user and forum ‘lurker’, I am a ‘multiple instance masker’ in my use of dark table for processing my b&w.

Therefore my vote would be for a simple control as in option 1

However, I am not averse to what Wilecoyote wants to do and will adapt to whatever he finally decides, as it is his baby (and thanks very much).

Sorry, did type ‘darktable’, that’s autocorrect for you.

Don’t worry, we only get the pitchforks out when someone types lightroom ;-).

Thanks for the explanation. I had previously read the sections Martin referred to, and in a general sense I could see how the different luminance estimators, once calculated, would then have somewhat different effects on color when used in the preservation math, some of which are noted. Even then, I don’t think I would ever look at an image and say, “oh ya, for this one I should use x, y or z estimator”. That feeling extended to predicting the impact on the resulting mask.

It sounds like the expected differences will normally be minimal in this case, so in a way that is good, and maybe a vote for potential simplification or even, as you have pondered, just dropping the selection.

I was noting my earlier observation using the recent image of the red gate.

I had the edit in a state where there was a nice warm glow on the red, and then I applied your module just to experiment. At 100% with the HQ preview on, I was looking at the gate, and there were features from the light on the edges and across the rungs of the gate. I was looking at some corrosion at the welds or joints, and later at the grass and the tree in the background and to the right. Cycling through the estimators seemed to change the module output in some of these small sections wrt contrast and color. The color of the red would change, and there were changes in the distribution of, I guess, almost what you would call “micro contrast”.

I think I have deleted that edit, but I will go and see if I still have it, and then go to some other images and see if I can notice any of the same traits. I am wondering if, in the end, you would ever even notice it zoomed out, and I suspect I may have had some strong settings in place for boost etc. while trying to test the limits of the module. It could also be that if used “properly”, these perceived differences might all but vanish… I’ll do a little more playing around in case I can produce anything informative.

Thanks all for your comments wrt the estimators.

I can chime in with the following regarding the “luminance estimators”:

- It’s possible to view the norms mean, Euclidean, power, and max as all being power norms, with 1, 2, 3, and infinity as their respective powers.

- It would make most sense to me to use the norm with the same direction as the effect that is applied based on its result. Local contrast rgb, which uses rgb scaling, applies the effect as a multiplication of the rgb triplet → same direction as the Euclidean norm.
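To make the first point concrete, here is a minimal Python sketch of that “family of power norms” view. It is only an illustration, not taken from the darktable source: the function name `power_norm`, the sample pixel, and the choice to normalize by the number of channels are all assumptions, and the real estimators may use different formulas or normalization.

```python
import math

def power_norm(rgb, p):
    """Generalized power mean of the absolute channel values (hypothetical helper).

    p = 1 gives the mean, p = 2 a Euclidean-style norm,
    p = 3 a cubic power norm, p = infinity the max.
    """
    values = [abs(c) for c in rgb]
    if math.isinf(p):
        return max(values)
    return (sum(v ** p for v in values) / len(values)) ** (1.0 / p)

# Illustrative pixel, not from any real image.
pixel = (0.8, 0.3, 0.1)
powers = {"mean": 1, "euclidean": 2, "power": 3, "max": math.inf}
norms = {name: power_norm(pixel, p) for name, p in powers.items()}

# RGB scaling: multiply the whole triplet by one gain factor.
gain = 1.5
scaled = tuple(gain * c for c in pixel)
scaled_norms = {name: power_norm(scaled, p) for name, p in powers.items()}

for name in powers:
    print(f"{name:9s} before={norms[name]:.4f} after={scaled_norms[name]:.4f}")
```

With this definition the estimate grows with the power (mean ≤ Euclidean ≤ power ≤ max), and every one of these norms scales by exactly the gain when the triplet is multiplied as a whole, so the differences between estimators come from how they weight the brightest channel rather than from the scaling step itself.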

Thanks so much for taking the time to comment…that is great context to have…

Hello,

Thank you for your analysis. It is very constructive, and you perfectly summarize why your single-scale approach is so effective: it is a surgical scalpel with total precision and efficiency.

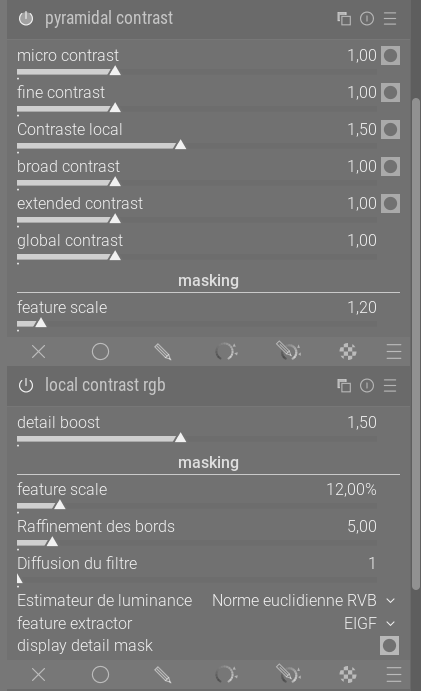

To complement this, I have developed a variant using your engine, “the heart of your work,” but with a multi-frequency modeling approach. The idea is to offer a “mixing console” that allows you to balance five frequency bands in parallel, while maintaining the cleanliness and speed of your original algorithm. I have also incorporated @jandren's suggestion for global contrast.

I think both tools have their place: yours for targeted, flawless corrections, and this version for acting globally on the image’s relief. What do you think?

I suggest creating a dedicated post to present the technical details and avoid derailing this discussion.

The code is available on GitHub: Christian-Bouhon/darktable at LC

I’m feeling tired, I’ll compile the appimage tomorrow morning.

Greetings from Lubéron,

Christian

Looking at the UI, would I be right in thinking this works like Contrast Equalizer, but in the scene-referred part of the pipeline and with sliders instead of the equalizer? In other words, your 5 sliders from top to bottom roughly equate to the 6 equalizer nodes from right to left?

Windows build with both versions of the LC modules for evaluation…

Take the usual precautions when running a dev version in parallel…

Hmmmm, does that mean we get two new modules which do more or less the same thing, but with a different GUI? I doubt this is a good idea. I’m still not convinced by this equalizer GUI, but that’s not the point. Two modules with the same maths behind them but with different GUIs is blowing up dt for no good reason. If it used a different algorithm, that would be a different story.

I think we should think of this as possible gui alternatives to use the same math

You mean as a setting (in a config file) to choose the GUI of my liking?

I take them now as proof of concepts, nothing is final at this point

Or as a dropdown to select different usages of the same algo.

Hello,

I don’t have time to recompile this morning because I haven’t found (or rather understood) a small bug. When we use a custom order, we can’t find the module. And yes, I’m an apprentice coder ;-).

As others have said earlier in this post, I would appreciate it if you didn’t start a philosophical discussion. Nothing has been done yet; it’s up to the darktable team and the author of this POC to decide.

https://drive.google.com/drive/folders/1zahcvlAyaN03L1VmX5esOOyhBkO8jiko?usp=sharing

PS: I am a French speaker and my second language is Dutch, so please excuse me if my comments are sometimes too direct or a little out of place.

Have a nice day,

Christian

I think these are just presented as alternatives, drafts, at the moment. I’m sure at most one of them (or one of any number of these alternatives) will be merged. I also guess one of them has a good chance to make it, as the feedback has been very positive. No idea which one, though.

Thank you for giving us the opportunity to try such an approach out!

That’s an interesting point! Could you elaborate on the (mathematical) motivation for preferring a norm that works in the same direction as the transformation done by the effect? Also, how should the term “direction” be interpreted with regard to scalar norms in this case?

If that’s the case, I got @Christian-B wrong. Making suggestions for alternatives is of course valid. And it’s for sure better if one can test this alternative.

I simply understood it like:

Why not offer two modules with different UIs?

Maybe because of the different module name

Just a voice from the maintainer, let’s not have two new modules sharing the same algorithm. We already have quite a lot of modules. We have Local Contrast already for display-referred, it is fine and a good move to propose a new module for the scene-referred workflow, but, please, not two new modules.