Hello, I’m the developer of Rapid Photo Downloader. If you use the program, or have used it in the past, I’d like to know what points of pain you have when using it, meaning things that annoy you or you wish were handled better.

Let me give an example: I use Rapid Photo Downloader to download photos from my DSLR, but it would be handy to download photos and videos from my smartphone too. The thing is, I’d like to download them to a different place than the photos from my regular camera, with a different naming convention. It’s a real pain in the butt to have to reconfigure the naming and destination preferences every time I do this.

Now is a good time for feedback. I’m currently working on the next version. The next version will use a completely different way of storing program preferences, so it’s a good opportunity to make changes that are different from what exists today.

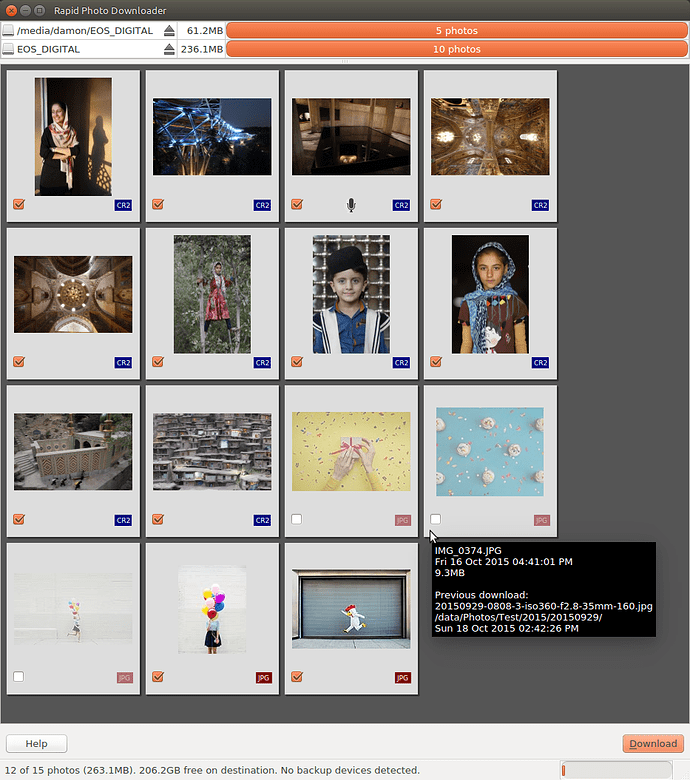

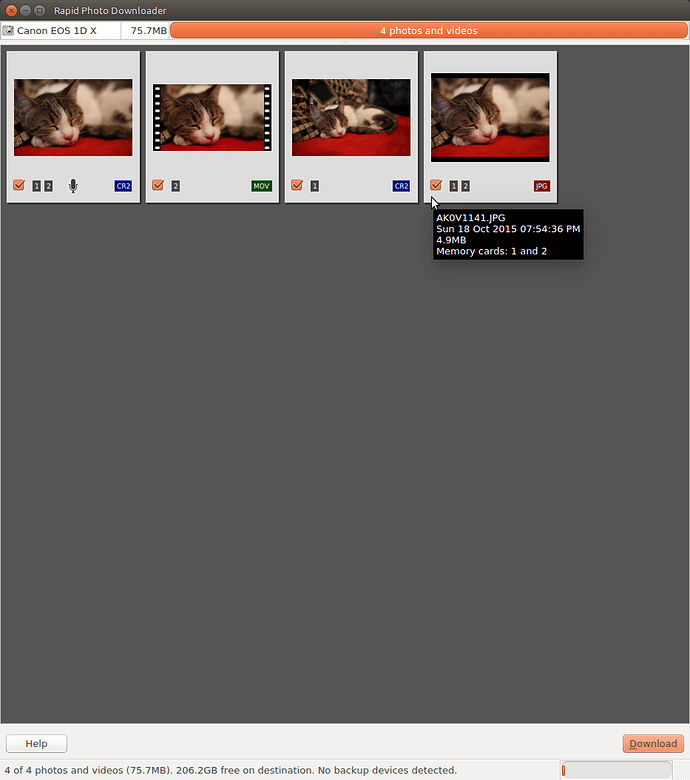

The next version already does some things better than the current release, although much of the user interface work is still to be done. Some screenshots from the most recent code:

This shows a mix files that have been downloaded a previous time and ones that have never been downloaded. Previously downloaded photos are dimmed. The third photo has an audio file associated with it (on some cameras you can record a little audio note - very handy).

This shows files from a camera with dual memory cards. The emblems indicate which card the files were found on. In this example, I set my camera to record photos to both cards simultaneously. I then deleted the third photo, which deletes it from the primary memory card, but not he secondary (also very handy at times!). The movie file was saved only to memory card 2.

For more detail on what has changed since the last release, I’ve copied and pasted from the ChangeLog:

New features:

- Refreshed user interface, with bigger thumbnails and easier visual identification of different file types.

- Download from all cameras supported by gphoto2, including smartphones:

  - You can download from multiple cameras/smartphones simultaneously.

  - Please note Rapid Photo Downloader will automatically unmount the camera/smartphone so it can gain exclusive access to it using gphoto2. If you attempt to mount it in another application (e.g. Gnome Files) while Rapid Photo Downloader is accessing it, the download could be interrupted.

  - Please also note that the phone should be unlocked before running Rapid Photo Downloader, or else it will be inaccessible.

- Remember files that have already been downloaded. You can still select previously downloaded files to download again, but they are unchecked by default, and their thumbnails are dimmed so you can differentiate them from files that are yet to be downloaded.

- Cache thumbnails generated when a device is scanned, making thumbnail generation quicker on subsequent scans.

- Cache photos and videos on cameras and phones used to generate thumbnails, speeding up their download if they are downloaded in the same session.

- Change the order in which thumbnails are generated, so that representative samples (based on time) are prioritized. This is helpful when downloading thousands of files at a time or over slow USB 2.0 connections.

- Generate freedesktop.org thumbnails for downloaded files, which means RAW files will have thumbnails in programs like Gnome Files and KDE Dolphin.

- Added progress bar when running under a Unity desktop.
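To make the "remember files that have already been downloaded" item a bit more concrete, here is a minimal sketch of the idea. It is not the program's actual code: the `ScannedFile` record, the `DownloadedFiles` class, the `initial_selection` helper, and the choice to identify a file by its name, size and modification time are all assumptions made for illustration.

```python
import sqlite3
from collections import namedtuple

# Hypothetical record describing a file found while scanning a device.
ScannedFile = namedtuple("ScannedFile", "name size mtime")


class DownloadedFiles:
    """Tiny SQLite-backed memory of files that were downloaded before."""

    def __init__(self, path=":memory:"):
        self.conn = sqlite3.connect(path)
        # Assumption: name + size + modification time identify a file.
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS downloaded ("
            "name TEXT, size INTEGER, mtime REAL, "
            "PRIMARY KEY (name, size, mtime))"
        )

    def add(self, f):
        """Record that a file has been downloaded."""
        self.conn.execute(
            "INSERT OR IGNORE INTO downloaded VALUES (?, ?, ?)",
            (f.name, f.size, f.mtime),
        )
        self.conn.commit()

    def seen(self, f):
        """Return True if an identical file was downloaded previously."""
        row = self.conn.execute(
            "SELECT 1 FROM downloaded WHERE name=? AND size=? AND mtime=?",
            (f.name, f.size, f.mtime),
        ).fetchone()
        return row is not None


def initial_selection(files, db):
    """Pair each file with its default check state.

    Previously downloaded files stay selectable but start unchecked.
    """
    return [(f, not db.seen(f)) for f in files]
```

A user interface could use the second element of each pair both to set the checkbox and to decide whether to dim the thumbnail.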

Removed feature:

- Rotate JPEG images - to apply lossless rotation, this feature requires the program jpegtran. Some users reported jpegtran corrupted their JPEGs’ metadata. To preserve file integrity, unfortunately the rotate JPEG option must be removed.

Switch to:

- PyQt 5.4, from PyGtk 2

- Python-gphoto2 to download from cameras, from Gnome GIO

- Python 3.4, from Python 2.7

- ZeroMQ for interprocess messaging, from python multi-processing

- GExiv2 for photo metadata, from pyexiv2

- Exiftool for video metadata, from kaa-metadata and hachoir-metadata

Missing features, still to be developed:

- Program configuration (preferences) cannot yet be adjusted through the Graphical User Interface (GUI). However, the preferences can be adjusted manually using a text editor.

- Clicking the eject icon by a device currently does nothing.

- There is no error log window.

- The GUI will not prompt for a Job Code before downloading.

- Most menu items do nothing.

Thanks in advance for any feedback.

Best,

Damon
