I am starting from real spectra, for which i can compute the projection on the CIE 1931 cmfs and the projections on the film sensitivities (the ground truth). then i compute XYZ from the real spectra and upsample it using your algorithm. the upsampled spectrum reprojects on the 1931 cmfs with zero XYZ error, but it inevitably gives big errors on the film sensitivities.
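
to make the round trip concrete, here is a minimal numpy sketch. it assumes gaussian stand-ins for both the 1931 cmfs and the film sensitivities (not the real CIE or kodak_portra_400 data), and instead of running the actual upsampler it builds a metamer of a flat spectrum by adding a metameric black (a spectral component the observer cannot see). the names `project`, `roundtrip_error_ev` and all curve parameters are hypothetical.

```python
import numpy as np

# wavelength grid in nm (assumption: 5 nm steps over 380-780 nm)
wl = np.arange(380.0, 781.0, 5.0)
dwl = wl[1] - wl[0]

def gaussian(mu, sigma):
    """Hypothetical stand-in for a sensitivity curve (not real CIE or film data)."""
    return np.exp(-0.5 * ((wl - mu) / sigma) ** 2)

# 3 x N stand-ins: observer (cmf-like) and film (sensitivity-like)
cmfs = np.stack([gaussian(600, 40) + 0.3 * gaussian(445, 20),
                 gaussian(550, 40), gaussian(450, 25)])
film = np.stack([gaussian(640, 25), gaussian(545, 30), gaussian(450, 20)])

def project(spectrum, sens):
    """Projection as a Riemann sum of spectrum * sensitivity over wavelength."""
    return sens @ spectrum * dwl

def roundtrip_error_ev(real, upsampled):
    """Per-channel film log-exposure error in EV (stops)."""
    return np.log2(project(upsampled, film) / project(real, film))

# metameric black: the part of the film red curve lying in the null space of cmfs
_, _, vt = np.linalg.svd(cmfs)
null_basis = vt[3:]                      # orthonormal rows with cmfs @ row ~ 0
black = null_basis.T @ (null_basis @ film[0])
black /= np.abs(black).max()

real = np.full(wl.size, 0.5)             # flat 50% reflectance, the "ground truth"
metamer = real + 0.2 * black             # stands in for an upsampled spectrum

xyz_error = project(metamer, cmfs) - project(real, cmfs)  # ~0 by construction
film_error = roundtrip_error_ev(real, metamer)            # red channel is off
```

the XYZ error is zero up to rounding, while the red film channel picks up the part of the spectrum the observer cannot see.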

the reason is exactly the nature of the sigmoid spectra: they have uv/ir lobes when they are of the “dip” type, and even the “peak” type can extend into the near uv and near ir when the peak sits at the edges of the visible range.
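
a quick sketch of why the “dip” type leaks outside the visible range. it assumes the sigmoid-of-quadratic form from Jakob & Hanika (2019), written here in a hypothetical vertex form `c0 * (wl - center)**2 + c2`; the exact parameterization used in hanatos2025 may differ. the sign of `c0` decides the type: negative gives a peak, positive gives a dip whose lobes saturate towards 1 in the uv and ir.

```python
import numpy as np

def sigmoid(x):
    """Algebraic sigmoid from Jakob & Hanika (2019), maps the real line to (0, 1)."""
    return 0.5 + x / (2.0 * np.sqrt(1.0 + x * x))

# deliberately extend the grid past the visible range (nm)
wl = np.arange(300.0, 901.0, 5.0)
visible = (wl >= 380.0) & (wl <= 730.0)

def sigmoid_spectrum(c0, center, c2):
    """Sigmoid of a quadratic in wavelength (hypothetical vertex-form parameters)."""
    return sigmoid(c0 * (wl - center) ** 2 + c2)

def outside_fraction(s):
    """Share of the (unweighted) spectral sum that falls outside 380-730 nm."""
    return s[~visible].sum() / s.sum()

peak = sigmoid_spectrum(-1e-3, 550.0, 2.0)   # c0 < 0: bell-shaped "peak" type
dip = sigmoid_spectrum(+1e-3, 550.0, -2.0)   # c0 > 0: "dip" type with uv/ir lobes

print(f"peak outside visible: {outside_fraction(peak):.3f}")
print(f"dip  outside visible: {outside_fraction(dip):.3f}")
```

with these made-up coefficients the peak keeps a negligible share of its sum outside 380-730 nm, while the dip puts a large share there; any film sensitivity tail reaching into that region picks it up.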

hence the idea of an optimized, bandpassed upsampling that reduces the round trip error against the exposures of the real spectra.
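
one way to picture the bandpass: multiply the upsampled spectrum by a smooth window before projecting it on the film sensitivities, so the XYZ projection is untouched and only the film side sees the tamed lobes. the logistic-edge window, its `lo`/`hi`/`width` parameters and the gaussian red stand-in are assumptions for illustration, not the exact window used here (where the window parameters are optimized against the corpus).

```python
import numpy as np

def logistic(x):
    return 1.0 / (1.0 + np.exp(-x))

def bandpass(wl, lo, hi, width):
    """Hypothetical smooth window: ~1 between lo and hi (nm), rolling off over ~width nm."""
    return logistic((wl - lo) / width) * logistic((hi - wl) / width)

def film_exposure(spectrum, sens, window, dwl):
    """Project the windowed spectrum on a sensitivity curve (Riemann sum)."""
    return float(np.sum(sens * window * spectrum) * dwl)

wl = np.arange(300.0, 901.0, 5.0)
dwl = 5.0
window = bandpass(wl, lo=400.0, hi=700.0, width=10.0)

# stand-ins: a dip-like spectrum with strong uv/ir lobes and a broad red sensitivity
dip = 1.0 - 0.9 * np.exp(-0.5 * ((wl - 550.0) / 60.0) ** 2)
red = np.exp(-0.5 * ((wl - 640.0) / 50.0) ** 2)

raw = film_exposure(dip, red, np.ones_like(wl), dwl)
tamed = film_exposure(dip, red, window, dwl)
print(f"exposure change: {np.log2(tamed / raw):+.2f} EV")
```

applying the window to the spectrum is equivalent to multiplying the film sensitivities by it, which is why it stays cheap.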

and then i am very guilty! over the weekend i tried to go past the optimal per channel bandpass.

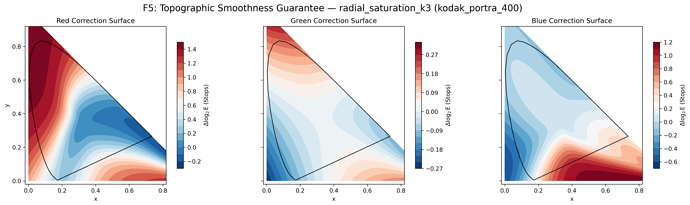

i would say it makes a visual difference, and i think it is very noticeable going from no bandpass to bandpass. and it can reach almost visually imperceptible differences (average max errors <2/20 ev for more than half of the corpus, and <3/20 ev for 90+%). the correction adds a “simple” per channel parametric exposure correction map in the xy plane (tc coord.).
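
for reference, one plausible reading of the error figures and of the correction: errors are log2 exposure ratios in stops (so 2/20 ev = 0.1 ev), taken per sample as the max over the three channels, and the correction multiplies each channel's exposure by 2 to the power of a correction in ev. the helper names and the toy numbers are hypothetical, and this sketch does not reproduce the tc coordinate mapping.

```python
import numpy as np

def max_abs_error_ev(est, truth):
    """Per-sample max over the 3 channels of |log2(est / truth)|, in EV (stops)."""
    return np.abs(np.log2(est / truth)).max(axis=1)

def fraction_below(err_ev, threshold_ev):
    """Share of the corpus whose error is under the threshold."""
    return float(np.mean(err_ev < threshold_ev))

def apply_correction(exposure, correction_ev):
    """Per-channel exposure correction expressed in EV: multiply by 2**c."""
    return exposure * np.exp2(correction_ev)

# toy corpus of 3 samples x 3 channels (made-up numbers)
truth = np.ones((3, 3))
est = np.exp2(np.array([[0.05, -0.02, 0.00],
                        [0.12,  0.00, 0.00],
                        [0.00, -0.20, 0.10]]))

err = max_abs_error_ev(est, truth)            # approx. [0.05, 0.12, 0.20]
print(fraction_below(err, 2 / 20), fraction_below(err, 3 / 20))

# a perfect correction map would supply c = log2(truth / est) per channel
corrected = apply_correction(est, np.log2(truth / est))
```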

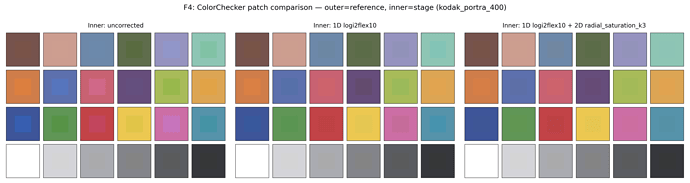

here is an example with a colorchecker reflectance dataset under the D55 illuminant, projected on the kodak_portra_400 sensitivities. the outer squares are the exposures of the real reflected spectra (visualized as straight sRGB, so not the real colors, but it helps to see the differences).

(left) uncorrected (hanatos2025 spectra), (center) bandpassed, (right) bandpassed plus per channel exposure correction.

the results are quite ok, even if it is a correction procedure and thus intrinsically not very elegant. i see it as a sensitivity adaptation of the original algorithm. since the film sensitivities are not so different from the cmfs, the adaptation can be encoded in 15-20 parameters per channel (the parameters of the bandpass and of the smooth 2d surface function). another benefit is that we seem to keep some of the good qualities of your underlying sigmoid algorithm, i.e. a smooth solution across the xy plane.

here is an example of a fitted 2d function on the xy plane. i am using saturating functions, so the correction is bounded to whatever maximum range we want to allow.
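
a minimal sketch of a saturating correction surface, assuming a quadratic polynomial in xy (centered on the equal-energy white, x = y = 1/3) squashed by `tanh` so the correction never exceeds ±`c_max` ev. the fit here linearizes the problem with `arctanh` and solves ordinary least squares; that is a swapped-in shortcut for illustration, not necessarily how the surfaces above were fitted, and the synthetic data is made up.

```python
import numpy as np

def design_matrix(x, y, x0=1 / 3, y0=1 / 3):
    """Quadratic monomials in chromaticity, centered on a reference white (assumed E)."""
    u, v = x - x0, y - y0
    return np.stack([np.ones_like(u), u, v, u * u, u * v, v * v], axis=1)

def surface(coeffs, x, y, c_max):
    """Bounded correction in EV: tanh saturates the polynomial into [-c_max, c_max]."""
    return c_max * np.tanh(design_matrix(x, y) @ coeffs)

def fit_surface(x, y, target_ev, c_max, eps=1e-3):
    """Invert the saturation, then fit the polynomial by linear least squares."""
    ratio = np.clip(target_ev / c_max, -1 + eps, 1 - eps)   # keep arctanh finite
    coeffs, *_ = np.linalg.lstsq(design_matrix(x, y), np.arctanh(ratio), rcond=None)
    return coeffs

# synthetic check: recover known coefficients from noise-free samples
rng = np.random.default_rng(0)
x, y = rng.uniform(0.2, 0.5, 200), rng.uniform(0.2, 0.5, 200)
true_coeffs = np.array([0.1, 0.5, -0.3, 1.0, 0.0, -0.5])
c_max = 0.5
target = surface(true_coeffs, x, y, c_max)
fitted = fit_surface(x, y, target, c_max)
```

in practice `target_ev` would be the per-channel log2(truth / estimate) over the corpus, fitted once per channel.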

here are also some plots of the log exposure errors for a few spectral datasets from colour-science. the left column is the plain hanatos2025 round trip error, the center is bandpass only, and the right is bandpass + surface correction.

we cannot expect a perfectly flat pancake, because the metameric spaces of the cmfs and of the film are expected to be slightly different, but we can compress it, i.e. minimize its spread.

overall, the bandpass is shared, cheap and easy to compute, and the three exposure corrections are not expensive to compute either. it is a dirty solution, but it seems to work ok-ish and does not require shipping a new lut of the sigmoid spectra in triangular coordinates for every stock. still, it is a correction and might not make people feel clean.

working with RGB from raw files seems the logical portable standard, even if the approach above implies the chain camera sensitivity exposure -> rgb -> spectra -> sensitivity adaptation -> film sensitivity exposure. but it stays agnostic of the camera sensitivity (we trust the manufacturers/calibrators that the rgb values from raw files are good estimates).
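
the chain above can be written as a single function to show where each piece plugs in. everything here is a hypothetical sketch: `cam_to_xyz` stands for whatever matrix the raw metadata/calibration provides, `upsample` for the sigmoid lut lookup, and `correction_ev` for the three per-channel xy surfaces; the toy stand-ins at the bottom only check the plumbing.

```python
import numpy as np
from typing import Callable

def film_exposure_from_raw(
    rgb: np.ndarray,                                    # camera rgb from the raw file, shape (3,)
    cam_to_xyz: np.ndarray,                             # 3x3 camera -> XYZ matrix
    upsample: Callable[[np.ndarray], np.ndarray],       # XYZ -> spectrum on the wavelength grid
    window: np.ndarray,                                 # shared bandpass window, shape (N,)
    film: np.ndarray,                                   # film sensitivities, shape (3, N)
    correction_ev: Callable[[np.ndarray], np.ndarray],  # xy -> per-channel correction in EV
    dwl: float,                                         # wavelength step in nm
) -> np.ndarray:
    xyz = cam_to_xyz @ rgb                       # camera-specific part ends here
    xy = xyz[:2] / xyz.sum()                     # chromaticity for the correction maps
    spectrum = upsample(xyz)                     # intended to be a metamer of xyz under the cmfs
    exposure = film @ (window * spectrum) * dwl  # bandpassed film projection
    return exposure * np.exp2(correction_ev(xy))

# toy plumbing check with trivial stand-ins (4-sample grid)
result = film_exposure_from_raw(
    rgb=np.array([1.0, 2.0, 3.0]),
    cam_to_xyz=np.eye(3),
    upsample=lambda xyz: np.full(4, xyz.mean()),
    window=np.ones(4),
    film=np.ones((3, 4)),
    correction_ev=lambda xy: np.zeros(3),
    dwl=1.0,
)
```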

anyway, the errors above are measured against real spectra, so they show that the procedure, although not elegant, is compact in number of parameters and kinda works in the real world.

in analog cameras, lenses absorb uv and gently roll off the near uv region, while the near ir is more open. film might also have color filters, but this effect is already included in the sensitivities (which are density measurements of the effective photographic process, after all).

but in this case the window only takes care of the overshooting lobes of the sigmoid spectra compared to real ones, that’s it. the “dip” sigmoid spectra are a particular kind of metamer with a huuuuuge non-visible contribution (even x-rays). the bandpass just tames those lobes to mimic the average behavior of a corpus of real spectra. essentially we are injecting the trends of the corpus into the bandpass and the 2D surface, hoping that the simple bandpass+surface model can capture, in a handful of parameters, the sensitivity adaptation transform of the upsampling algorithm (with low enough error).

if we wanted to optimize the sigmoid spectra themselves in a camera-sensitivity-agnostic way (thus starting from RGB → XYZ), i guess the procedure would still end up relying on a spectral dataset to minimize the round trip error of the upsampled spectra on the film sensitivities, while keeping the XYZ assigned to the original spectra. this is because otherwise we would not have any ground truth for the film exposures.
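
to make that constraint concrete for a single spectrum where the ground truth is known: keeping XYZ fixed means only moving along metameric blacks (the null space of the cmfs), and within that subspace the film exposure error can be minimized by linear least squares. this is a hedged illustration with gaussian stand-ins; it ignores physical bounds (non-negativity, reflectance ≤ 1), and the dataset-level optimization discussed above would instead fit shared parameters over a whole corpus.

```python
import numpy as np

wl = np.arange(380.0, 781.0, 5.0)
dwl = 5.0

def g(mu, sd):
    """Gaussian stand-in curve (hypothetical, not real CIE or film data)."""
    return np.exp(-0.5 * ((wl - mu) / sd) ** 2)

cmfs = np.stack([g(600, 40) + 0.3 * g(445, 20), g(550, 40), g(450, 25)]) * dwl
film = np.stack([g(640, 25), g(545, 30), g(450, 20)]) * dwl

# orthonormal basis of metameric blacks: columns n with cmfs @ n ~ 0
_, _, vt = np.linalg.svd(cmfs)
null_basis = vt[3:].T

def adjust_within_metamers(s, target_film):
    """Move s only along metameric blacks (XYZ unchanged) so that film @ s
    gets as close as possible to target_film in the least-squares sense."""
    z, *_ = np.linalg.lstsq(film @ null_basis, target_film - film @ s, rcond=None)
    return s + null_basis @ z

real = 0.3 + 0.4 * g(620, 50)                         # "ground truth" spectrum
black = null_basis @ (null_basis.T @ film[0])         # invisible to the cmfs
upsampled = real + 0.2 * black / np.abs(black).max()  # same XYZ, wrong film exposure

adjusted = adjust_within_metamers(upsampled, film @ real)
```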

but this is not my field and i might be making huge wrong assumptions.

One day I still hope to see Spektrafilm in darktable…