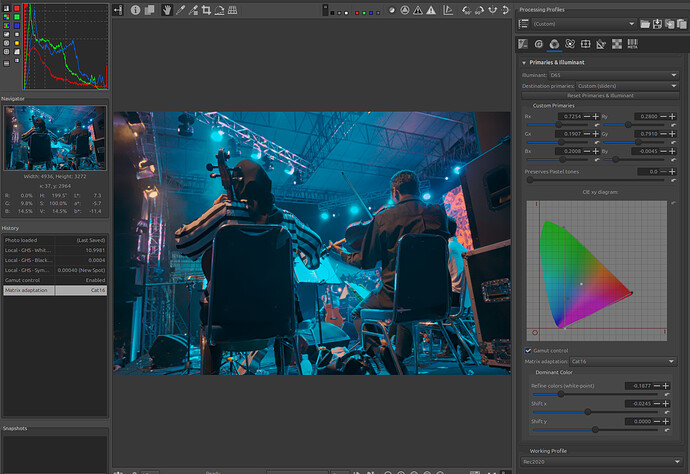

This third tutorial aims to explain the concept of a ‘Game changer’, with an example using an image of a show with LED lighting and visible spotlights.

In this tutorial, we will see how to use ‘Capture Sharpening’, ‘Gamut compression’, ‘Selective Editing > Generalized Hyperbolic Stretch’ (GHS), and ‘Abstract Profile’ (AP) together. Other tools are also necessary; we will cover them later.

Image selection:

Raw file: (Creative Commons Attribution-ShareAlike 4.0)

This image is very difficult to grasp, especially if you haven’t seen the show. What colors does the viewer perceive, and how can they be reproduced? Since I don’t know them, what follows is only a series of hypotheses. This process must be seen as a path, not a solution.

Learning objective:

The user will have assimilated the ‘Game changer’ concepts presented in the first and second tutorials:

- The role of GHS, in the linear portion of the data, which can be considered a ‘Pre-tone-mapper’, and the role of Abstract Profile, which prepares the data for use in the output (screen, TIFF/JPG).

- The role of Capture Sharpening to reduce noise in the flat areas of the image, and of course to sharpen.

- The main objective is to demonstrate (at least partially) the use of the new ‘Gamut compression’ tool in combination with GHS and Abstract Profile (Primaries).

Teaching approach:

- The lack of easily accessible and up-to-date documentation hinders this presentation, but we will manage without it (or almost). Here is a link to the Hugo documentation currently being developed:

Gamut compression Hugo

The issue of out-of-gamut or anecdotal colors, due, for example, to LEDs:

- To provide some background and help you understand, I’ve included two links (which I already shared during the Gamut Compression presentation in September 2024).

Generally speaking, apart from very specific cases, such as the image in this tutorial, the goal of ‘Gamut compression’ is to fit colors into the gamut (for example, the screen’s gamut), while preserving the original working profile for basic processing.

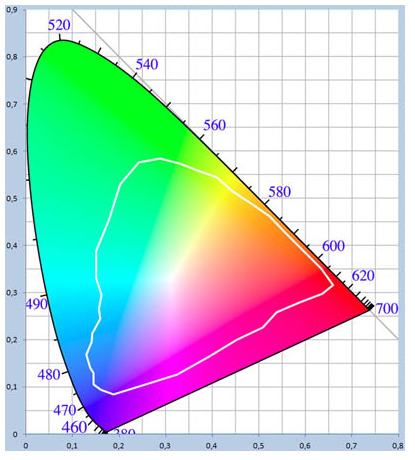

We use the principle of ‘Pointer’s gamut,’ which, within the set of colors perceived by humans (CIExy diagram), corresponds to reflected colors. This includes all cases where there is no light source in the image (sun, incandescent, LED, etc.), which is still the majority of cases.
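To make "fitting colors into a gamut" concrete, here is a minimal Python sketch that tests whether a CIExy chromaticity falls inside the triangle formed by a gamut's three primaries. The primaries are the published values for DCI-P3 and Rec2020; the function names are hypothetical, and this only illustrates the geometry, not RawTherapee's implementation.

```python
# xy chromaticities of the primaries (published standard values): R, G, B.
DCI_P3 = ((0.680, 0.320), (0.265, 0.690), (0.150, 0.060))
REC2020 = ((0.708, 0.292), (0.170, 0.797), (0.131, 0.046))
D65 = (0.3127, 0.3290)


def _cross(a, b, p):
    """Signed area term telling on which side of the edge a -> b point p lies."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def inside_gamut(xy, primaries):
    """Return True if chromaticity xy lies inside the triangle of primaries."""
    r, g, b = primaries
    signs = (_cross(r, g, xy), _cross(g, b, xy), _cross(b, r, xy))
    # Inside (or on an edge) when the point is on the same side of all edges.
    return all(s >= 0 for s in signs) or all(s <= 0 for s in signs)


if __name__ == "__main__":
    print("D65 inside DCI-P3:", inside_gamut(D65, DCI_P3))
    print("Rec2020 green inside DCI-P3:", inside_gamut(REC2020[1], DCI_P3))
```

A color such as the Rec2020 green primary is valid in the working profile but lies outside the DCI-P3 triangle. That is the kind of color Gamut compression is meant to bring back inside the target gamut.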

We had a debate during the development of the code and the Pull Request. Should we provide base values for each working profile (ProPhoto by default, but some choose Rec2020, Adobe RGB, etc.) and each target compression gamut? What should the default values (which need adjusting) be for ‘Threshold’ and ‘Maximum Distance Limit’? Given the complexity and uncertainties (especially when light sources are present in the image, or artificial colors are used), we chose to keep the default settings, which are set for ACES AP0 and ACES AP1.

In the linked documentation, you will find the approximate settings I determined through numerous tests for ‘Working Profile = ProPhoto’ and the six available ‘Target Compression Gamut’ options.

- I will attach a single pp3 (at the end) containing all the settings, provided as a guide. For the referenced image, the settings are often arbitrary: I never saw the show, so I have no idea which colors the audience actually perceived. Furthermore, my screen is very low-end and small… So consider this a starting point, not a final destination.

First step: Capture Sharpening

-

Disable everything, switch to ‘Neutral’ mode

-

In the ‘White Balance’ (Color Tab), Leave it on ‘Camera’ in the face of ignorance

-

Set the working profile to ‘Rec2020’, because ‘Prophoto’ already contains imaginary colors and is at the limits of the CIExy color space, allowing virtually no retouching.

-

Enable ‘Capture Sharpening’ (Raw tab).

-

Verify that ‘Contrast Threshold’ displays a value other than zero

-

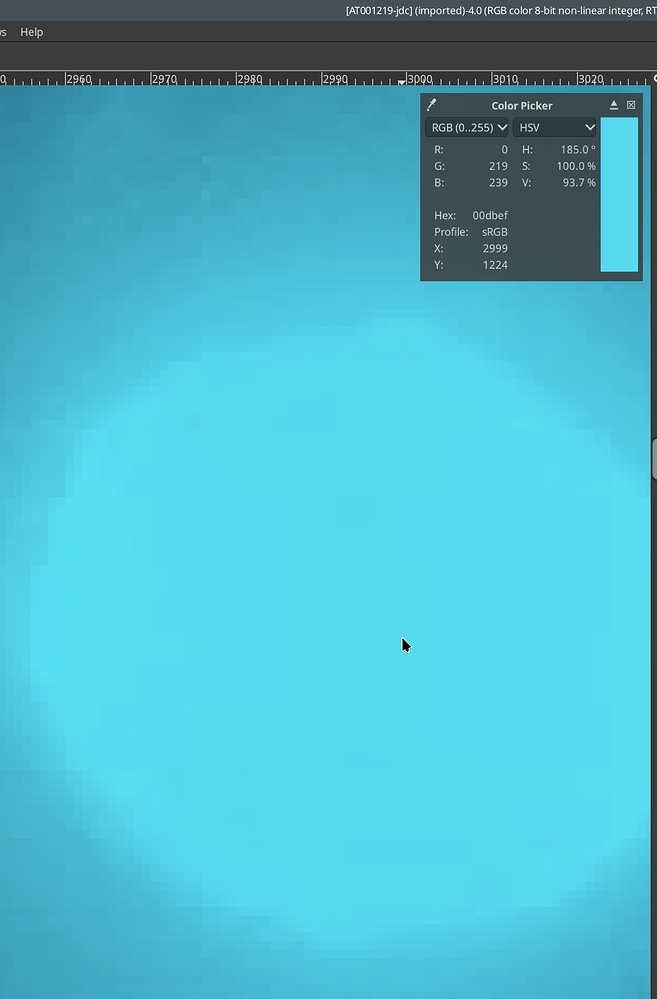

At 100% or 200%, you will see noise appear in the background.

-

Enable ‘Show contrast mask’, which is also insensitive to the Preview position. The noise on the black background becomes visible. I’m not going to repeat what was done in tutorial #2.

Remove noise on flat areas

- Disable the mask.

- View the image at 100% or 200%, then adjust the ‘Postsharpening denoise’ setting, which will take the mask information into account to process the noise. Adjust this denoising to your liking.
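The idea of letting a contrast mask decide where denoising applies can be illustrated with a toy 1-D sketch in Python. Everything here is an assumption made for illustration: the hard threshold, the 3-tap blur standing in for a denoiser, and the function names. RawTherapee's actual algorithm is more elaborate.

```python
def contrast_mask(signal, threshold):
    """1.0 where the local contrast reaches the threshold (detail), else 0.0."""
    mask = []
    last = len(signal) - 1
    for i, value in enumerate(signal):
        left = signal[max(i - 1, 0)]
        right = signal[min(i + 1, last)]
        contrast = max(abs(value - left), abs(value - right))
        mask.append(1.0 if contrast >= threshold else 0.0)
    return mask


def box_blur(signal):
    """3-tap mean filter with edge replication, standing in for a denoiser."""
    last = len(signal) - 1
    return [
        (signal[max(i - 1, 0)] + signal[i] + signal[min(i + 1, last)]) / 3.0
        for i in range(len(signal))
    ]


def denoise_flat_areas(signal, threshold, strength=1.0):
    """Blend the blurred signal in only where the mask marks a flat area."""
    mask = contrast_mask(signal, threshold)
    blurred = box_blur(signal)
    return [
        s + strength * (1.0 - m) * (b - s)
        for s, m, b in zip(signal, mask, blurred)
    ]


if __name__ == "__main__":
    # Noisy dark background followed by a bright edge (hypothetical values).
    row = [0.10, 0.12, 0.09, 0.11, 0.10, 0.90, 0.92, 0.91]
    print(denoise_flat_areas(row, threshold=0.2))
```

In this toy version the two samples on either side of the edge are left untouched, while the noisy background samples are averaged with their neighbors.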

Second step: Gamut compression

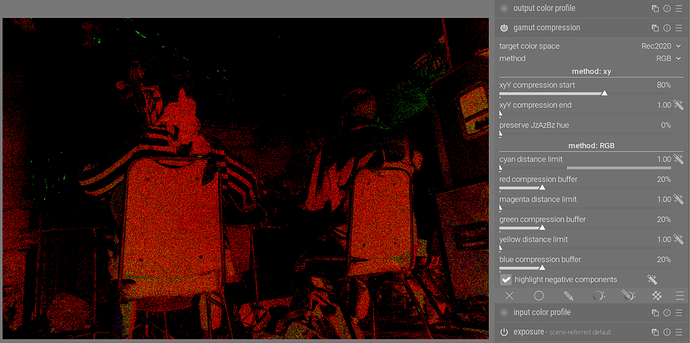

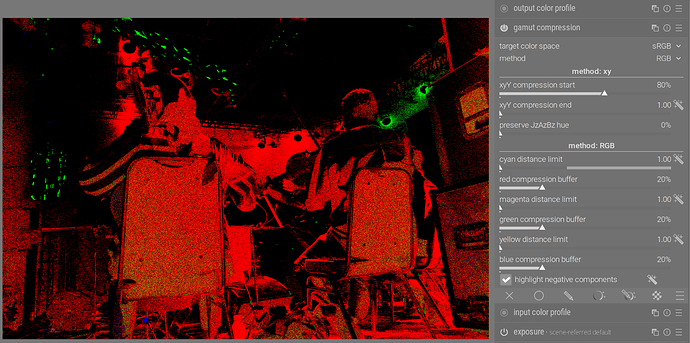

This step is necessary, even with less-than-ideal settings, so that ‘Gamut compression’ acts before GHS and you can see its impact on GHS. By enabling or disabling Gamut compression, you will see that it has only a minimal impact on the maximum White Point (WP linear) and the ‘RGB values’ in ‘GHS’.

I set the target compression gamut to DCI-P3, which could be considered a high-end screen (which I don’t own). Note that ‘Gamut compression’ doesn’t take into account the gamma of the output profile.

Choose the settings I suggested in the documentation for DCI-P3; these should only be considered a starting point: Threshold Cyan=0.40, Threshold Magenta=0.87, Threshold Yellow=0.92, Limits Cyan=1.08, Limits Magenta=1.20, Limits Yellow=1.26

The (probable) reason for using cyan, magenta, and yellow rather than RGB values is that they correspond roughly, for the Pointer’s Gamut, to the circle inscribed in the CIExy diagram, and therefore allow us to act directly in that direction… We will see later how to do this (or at least what I propose).
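Another way to read the cyan/magenta/yellow labels is through the ACES Reference Gamut Compression, on which the tool appears to be based. This is an assumption suggested by the ACES AP0/AP1 defaults, not something verified against the RawTherapee code. In that scheme, each channel's distance from the achromatic axis is measured separately: a deficit of red pushes a color towards cyan, a deficit of green towards magenta, a deficit of blue towards yellow. The sketch below computes these distances for one pixel; the function name and the sample pixel are hypothetical, and the RGB values are assumed to be linear and expressed in the target compression gamut.

```python
def channel_distances(rgb):
    """Per-channel distance from the achromatic axis (ACES RGC style).

    A distance of 0 means the channel equals the largest component;
    a distance above 1 means the channel is negative, i.e. out of gamut.
    """
    achromatic = max(rgb)
    if achromatic == 0.0:
        return {"cyan": 0.0, "magenta": 0.0, "yellow": 0.0}
    red, green, blue = ((achromatic - c) / abs(achromatic) for c in rgb)
    # Low red -> cyan direction, low green -> magenta, low blue -> yellow.
    return {"cyan": red, "magenta": green, "yellow": blue}


if __name__ == "__main__":
    # Hypothetical saturated blue LED pixel with a negative green component.
    print(channel_distances((0.2, -0.1, 1.0)))
```

With this reading, a pixel whose green component is negative has a ‘magenta’ distance above 1, so the Magenta threshold and limit control how it is treated.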

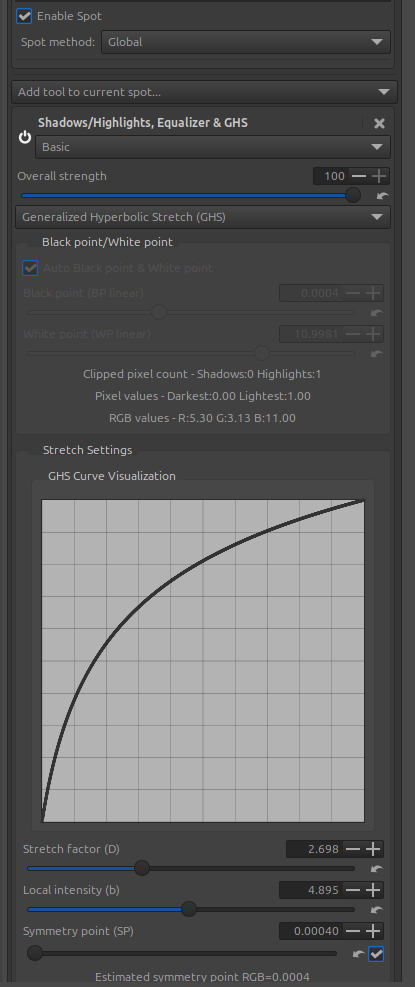

Third step: Generalized Hyperbolic Stretch - GHS

The goal of this step is to adjust the White Point (WP linear) and Black Point (BP linear), and at a minimum, to adjust the image contrast.

I proceeded in two steps (2 RT-spots in Global mode)… The choices are fairly arbitrary.

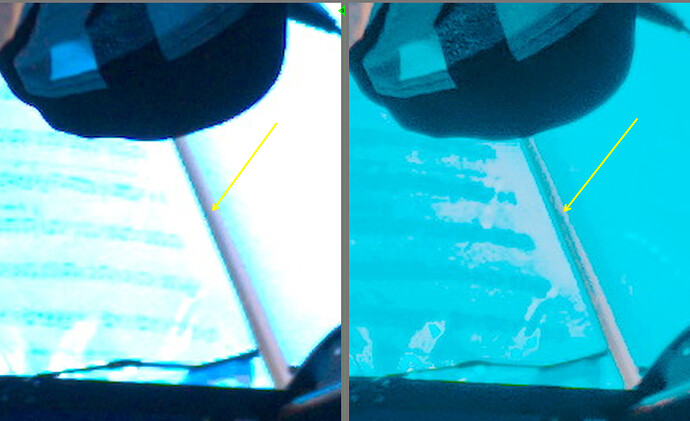

But look at the result of the first RT-Spot with GHS.

Note the enormous values of the White Point (WP linear) = 10.99 and the RGB values: R=5.30, G=3.13, B=11.00. We are completely out of gamut, outside the usual range. This is probably due to the LED illuminant (the LEDs visible in the image) and to the camera’s observer.
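To get a feel for these numbers, here is a minimal Python sketch of a linear rescale that maps the black point to 0 and the white point to 1. That GHS applies its ‘BP linear’ and ‘WP linear’ values in exactly this way is an assumption; the function name is hypothetical, and the black point of 0.0 is a placeholder since the tutorial does not give its value.

```python
def normalize_linear(rgb, black_point, white_point):
    """Rescale linear values so black_point maps to 0 and white_point to 1."""
    span = white_point - black_point
    if span <= 0:
        raise ValueError("white_point must be greater than black_point")
    return tuple((c - black_point) / span for c in rgb)


if __name__ == "__main__":
    # Maximum RGB values and WP linear reported by GHS in this tutorial.
    rgb_max = (5.30, 3.13, 11.00)
    print(normalize_linear(rgb_max, black_point=0.0, white_point=10.99))
```

Under these assumptions the blue maximum lands at about 1.0 after rescaling, with red a little under half of that and green under a third, which shows how strongly the blue channel dominates the highlights.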

Fourth step: return to Gamut compression

Add ‘Lockable color pickers’ to the image, especially on the LEDs.

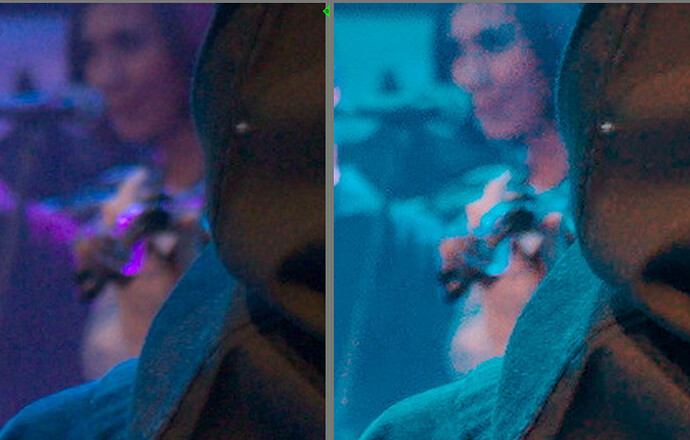

Change the settings to get a noticeable effect. Try reducing what I think is a drift towards magenta (I’m not sure, because some LEDs might actually have been magenta).

The proposed settings (which I am not at all sure about) are, as you can see, very far from the basic ones: Threshold Cyan=0.40, Threshold Magenta=0.30, Threshold Yellow=0.92, Limits Cyan=1.08, Limits Magenta=1.98, Limits Yellow=1.32, Power=1.70
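The roles of ‘Threshold’, ‘Limits’ and ‘Power’ can be sketched with the compression curve published for the ACES Reference Gamut Compression. That RawTherapee uses this exact curve is an assumption, and `compress_distance` is a hypothetical name. In that curve, a distance below the threshold is left untouched, a distance equal to the limit is mapped exactly onto the gamut boundary (1.0), and the power shapes the roll-off in between. The curve requires threshold < 1 < limit.

```python
# Settings proposed in this step: (threshold, limit) per direction.
SETTINGS = {
    "cyan": (0.40, 1.08),
    "magenta": (0.30, 1.98),
    "yellow": (0.92, 1.32),
}
POWER = 1.70


def compress_distance(dist, threshold, limit, power):
    """Compress a distance from the achromatic axis (ACES RGC power curve)."""
    if dist < threshold:
        return dist  # colors close to neutral are not modified
    # Scale chosen so that dist == limit maps exactly to 1.0.
    scale = (limit - threshold) / (
        ((1.0 - threshold) / (limit - threshold)) ** -power - 1.0
    ) ** (1.0 / power)
    x = (dist - threshold) / scale
    return threshold + scale * x / (1.0 + x ** power) ** (1.0 / power)


if __name__ == "__main__":
    for name, (threshold, limit) in SETTINGS.items():
        for dist in (0.2, 0.6, 1.0, limit):
            out = compress_distance(dist, threshold, limit, POWER)
            print(f"{name:8s} distance {dist:.2f} -> {out:.3f}")
```

Seen this way, lowering the Magenta threshold from 0.87 to 0.30 means compression starts much closer to neutral, and raising the limit to 1.98 means colors much further out of gamut are still pulled back to the boundary. This is consistent with the stronger effect on the magenta drift.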

At this stage we have significantly reduced the color drift, but not eliminated it.

To see the impact of ‘Gamut compression’ on GHS, try disabling and re-enabling GHS. Try setting ‘Stretch factor (D)’ to 0.001, disabling and enabling ‘Auto Black point & White point’, and enabling/disabling ‘Gamut compression’. You’ll see that there are indeed differences, but only slight ones, on the ‘WP linear’ and ‘BP linear’.

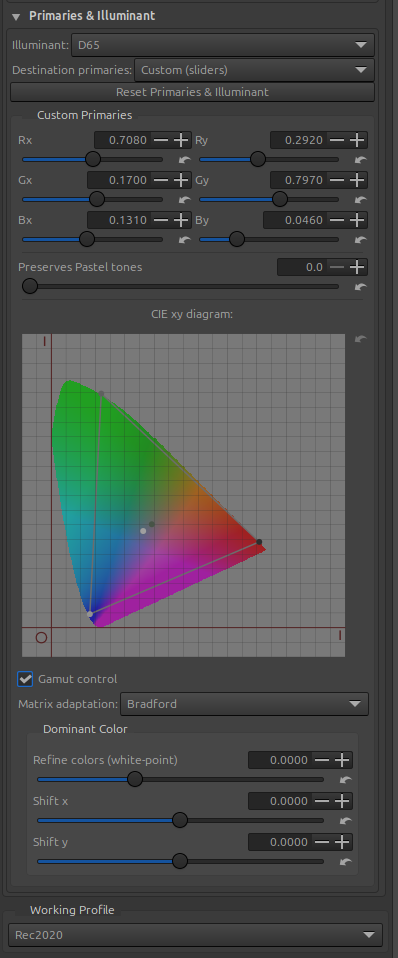

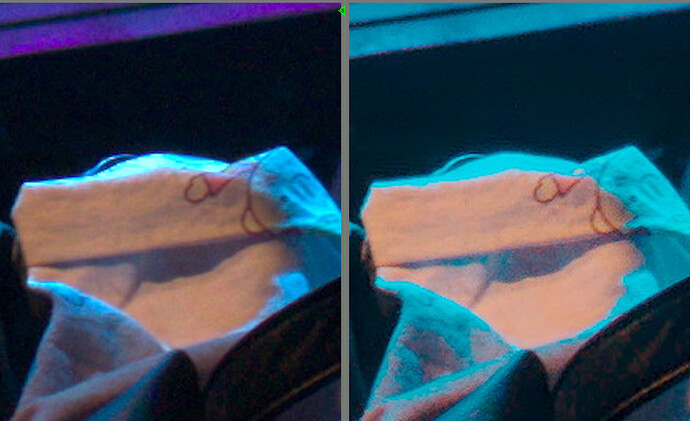

Fifth step: Abstract Profile and the primaries

I first adjusted the ‘Gamma’, ‘Slope’, and ‘Contrast enhancement’ settings to make the image more pleasing (in my opinion). Of course, you can change these settings.

The important thing is to try and adjust the primary colors and dominant color to minimize color shifts.

Starting point for the module ‘Primaries & Illuminant’

Next, adjust the primaries with the 6 sliders, and try the ‘Gamut control’ and ‘Matrix adaptation’ checkboxes. By modifying the primaries, there is obviously a significant risk (in fact, that’s the intention) of altering the color gamut. Try it out and see the effects on the image.

- Change the primaries - it’s not intuitive at all…

- Enable/disable ‘Gamut control’

- Try ‘Bradford’ instead of ‘Cat16’, etc.

- Modify the ‘Dominant Color’ settings.
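Why moving the primaries is not intuitive can be seen from the standard derivation of an RGB to XYZ matrix from the xy coordinates of the three primaries and the white point. The Python sketch below follows that textbook derivation; it is not taken from RawTherapee's code, and the function names and the shifted red primary are hypothetical.

```python
def xy_to_xyz(x, y):
    """Chromaticity (x, y) to XYZ with Y normalised to 1."""
    return (x / y, 1.0, (1.0 - x - y) / y)


def det3(m):
    """Determinant of a 3x3 matrix given as three rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def rgb_to_xyz_matrix(red, green, blue, white):
    """Build the RGB -> XYZ matrix (rows X, Y, Z) from xy chromaticities."""
    cols = [xy_to_xyz(*p) for p in (red, green, blue)]
    # Unscaled matrix: one row per X, Y, Z and one column per primary.
    base = [[cols[c][r] for c in range(3)] for r in range(3)]
    target = xy_to_xyz(*white)
    base_det = det3(base)
    scales = []
    for k in range(3):
        # Cramer's rule: replace column k with the white point.
        replaced = [
            [target[r] if c == k else base[r][c] for c in range(3)]
            for r in range(3)
        ]
        scales.append(det3(replaced) / base_det)
    # Scale each primary so that R = G = B = 1 reproduces the white point.
    return [[base[r][c] * scales[c] for c in range(3)] for r in range(3)]


if __name__ == "__main__":
    d65 = (0.3127, 0.3290)
    rec2020 = rgb_to_xyz_matrix((0.708, 0.292), (0.170, 0.797), (0.131, 0.046), d65)
    # Hypothetical shift of the red primary only.
    shifted = rgb_to_xyz_matrix((0.680, 0.310), (0.170, 0.797), (0.131, 0.046), d65)
    for row_a, row_b in zip(rec2020, shifted):
        print([round(v, 4) for v in row_a], [round(v, 4) for v in row_b])
```

Because the three scale factors are solved together against the white point, moving a single primary changes all three columns of the matrix, not only its own. A small shift of one slider can therefore affect colors across the whole image.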

If necessary, return to the ‘Gamut compression’ and ‘GHS’ settings…

Resulting image

Of course, there are other ways to do it, with less exotic solutions (GHS, Abstract Profile, etc.). The GUI could be improved… made more user-friendly, with modules grouped together (where possible). The pipeline needs modification (difficult). This could be done with RawTherapee tools or other software that offers better communication. I’m referring to the ‘game changer’ concept.

Thank you

Jacques

If you don’t want to read all the posts… here’s the latest pp3 I updated. It’s still not perfect, nor THE solution, but it’s a possible PATH forward (however, from a pedagogical standpoint, it’s better to read the posts that explain ‘why’). This also requires using commit 0dbfe85, or a later one…